Epistemic Foundations of Game Theory

Non-cooperative game theory studies how individual players, or agents, make decisions in situations involving strategic interaction. In these situations, each player’s outcome depends not only on their own choices but also on the choices of the other players (see Ross 1997 [2024] for an overview). Epistemic game theory investigates how assumptions about the players’ beliefs and rationality influence their choices in strategic situations. This entry begins by discussing the role of uncertainty in strategic situations. It then introduces models of multi-agent knowledge and belief developed in the epistemic game theory and epistemic logic literature. Next, it examines how these models can be used to characterize classical game-theoretic solution concepts, focusing on the relationship between players’ rationality and their mutual beliefs about each other’s rationality. The entry concludes with a brief overview of other key topics in the epistemic game theory literature and suggestions for further reading.

- 1. The Epistemic View of Games

- 2. Game Models

- 3. Epistemic Characterizations of Solution Concepts

- 4. Additional Topics

- 5. Concluding Remarks

- Bibliography

- Academic Tools

- Other Internet Resources

- Related Entries

1. The Epistemic View of Games

This section provides an overview of the key ideas and concepts that are used throughout epistemic game theory.

1.1 Classical Game Theory

A game refers to an interactive situation involving a group of “self-interested” players, or agents. The defining feature of a game is that the players are engaged in an “interdependent decision problem” where the outcome of the game depends on all of the players’ choices (Schelling 1960). The mathematical description of a game includes at least the following components:

- the players: in this entry, we only consider games with finitely many players and use \(N \) to denote the set of players in a game;

- for each player \(i\in N\), a finite set of feasible options (typically called actions or strategies); and

- for each player \(i\in N\), a utility function that represents \(i\)’s preference over the possible outcomes of the game. A standard assumption in game theory is that the outcomes of a game are the sequences of actions, one for each player. A sequence of actions is called a strategy profile. Identifying the outcomes of a game with strategy profiles reflects the key idea that the outcome of a game depends on the choices of all players.

Different mathematical representations of a game describe other features of the interactive situation, such as the order in which the players move.

Definition 1.1 (Game in Strategic Form) A game in strategic form is a tuple \(\langle N , (S_i)_{i\in N}, (u_i)_{i\in N }\rangle\) where \(N \) is a nonempty finite set of players, for each \(i\in N\), \(S_i\) is a nonempty finite set of actions for player \(i\), and for each \(i\in N\), \(u_i:\times_{j\in N } S_j\rightarrow\mathbb{R}\) is player \(i\)’s utility function, where \(\times_{j\in N} S_j\) is the set of strategy profiles.

A game in strategic form represents a situation in which all the players make a single decision simultaneously without stochastic moves.

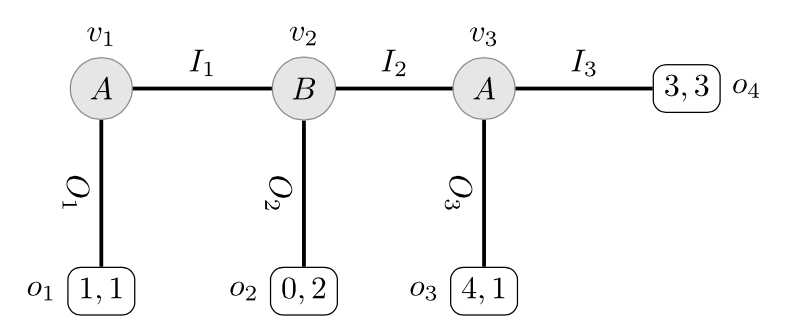

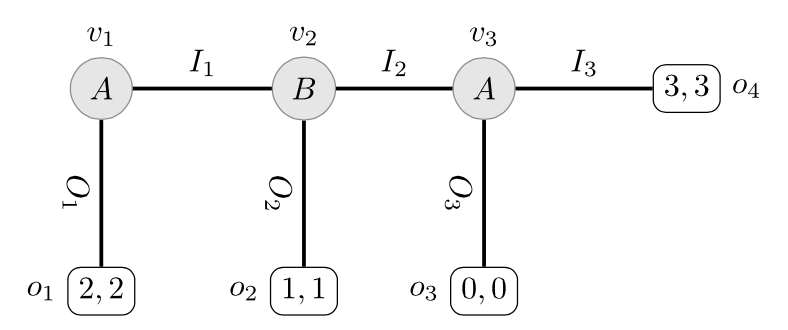

Figure 1 is an example of a game in strategic form. There are two players, Ann and Bob, and each has two available actions: \(N = \{\Ann, \Bob\}\), \(S_{\Ann} = \{u, d\}\) and \(S_{\Bob} = \{l, r\}\). The players’ utilities \(u_{\Ann}\) and \(u_{\Bob}\) are displayed in the cells of the matrix (the first number in the tuple is Ann’s utility and the second number is Bob’s utility). If Bob chooses \(l\), for instance, Ann prefers the outcome she would get by choosing \(u\) to the one she would get by choosing \(d\) since \(u_{\Ann}(u,l) > u_{\Ann}(d,l)\), but this preference is reversed if Bob chooses \(r\). In the game in Figure 1, there are 4 outcomes of the game corresponding to the 4 different strategy profiles \(\{(u,l), (u, r), (d, l), (d,r)\}\) (represented by each of the 4 cells in the matrix displayed in Figure 1).

|     |   | Bob |     |
|-----|---|-----|-----|
|     |   | l   | r   |
| Ann | u | 1,1 | 0,0 |
|     | d | 0,0 | 1,1 |

Figure 1: A coordination game

The game displayed in Figure 1 is called a pure coordination game: the players have a common interest in coordinating their choices on \((u, l)\) or \((d, r)\) and they are both indifferent about which way they coordinate their choices.
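To make Definition 1.1 concrete, the sketch below encodes the Figure 1 game in Python. The dictionary layout and the helper name `utility` are illustrative choices for this example, not standard notation; the point is only that the outcomes are exactly the strategy profiles \(S_{\Ann}\times S_{\Bob}\), and that Ann’s preference between \(u\) and \(d\) flips with Bob’s choice.

```python
from itertools import product

# Figure 1 (pure coordination game) as a game in strategic form <N, (S_i), (u_i)>.
# The data layout below is an illustrative choice, not a standard format.
players = ("Ann", "Bob")
strategies = {"Ann": ("u", "d"), "Bob": ("l", "r")}

# Payoffs keyed by strategy profile: (Ann's utility, Bob's utility).
payoffs = {
    ("u", "l"): (1, 1),
    ("u", "r"): (0, 0),
    ("d", "l"): (0, 0),
    ("d", "r"): (1, 1),
}

def utility(player, profile):
    """u_i(s): the given player's utility at strategy profile s."""
    return payoffs[profile][players.index(player)]

# Outcomes are strategy profiles: the Cartesian product of the S_i.
profiles = list(product(*(strategies[p] for p in players)))
print(profiles)  # [('u', 'l'), ('u', 'r'), ('d', 'l'), ('d', 'r')]

# Ann's preference between u and d depends on Bob's choice.
for b in strategies["Bob"]:
    best = max(strategies["Ann"], key=lambda a: utility("Ann", (a, b)))
    print(f"If Bob plays {b}, Ann prefers {best}")
```

In a pure coordination game both players receive the same utility at every profile, which is easy to confirm by comparing `utility("Ann", s)` and `utility("Bob", s)` over `profiles`.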

1.1.1 Solution Concepts and Mixed Strategies

A major focus of classical game theory research is studying and developing solution concepts. A solution concept associates a set of outcomes (i.e., a set of strategy profiles) with each game (from some fixed class of games). The most well-known solution concept is the Nash equilibrium, although we will encounter others in this entry. From a prescriptive point of view, a solution concept is a recommendation about what the players should do in a game, or about what outcomes can be expected assuming that the players choose rationally. From a predictive point of view, solution concepts describe what the players will actually do in a game.

Many solution concepts in game theory involve mixed strategies, where a player deliberately randomizes between their available actions rather than choosing one with certainty. The matching pennies game illustrates why mixed strategies are important: two players simultaneously show heads or tails, where one player wins if the coins match and the other wins if they differ. In this game, if your opponent can predict your choice, they will win by choosing accordingly. To prevent your opponent from gaining this advantage, you should make your choice truly unpredictable—even to yourself—by randomizing. A mixed strategy specifies the probability of choosing each action (e.g., 60% heads, 40% tails), selected from the infinitely many possible probability distributions over your available actions.

Formally, a mixed strategy for a player \(i\) is a probability measure over \(i\)’s available strategies. Let \(\Delta(X)\) denote the set of probability measures over the finite[1] set \(X\). Each \(m\in \Delta(S_i)\) is called a mixed strategy for player \(i\). If \(m\in\Delta(S_i)\) assigns probability 1 to a strategy \(s\in S_i\), then \(m\) is called a pure strategy (in this case, we write \(s\) for \(m\)).
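As a minimal sketch, a mixed strategy can be stored as a map from actions to probabilities, and expected utility then sums payoffs weighted by the product of the players’ probabilities. This assumes the players randomize independently, and it reuses Ann’s payoffs from Figure 1; the function names are hypothetical. Exact `Fraction` arithmetic is used so that the check “probabilities sum to 1” is not disturbed by floating-point rounding.

```python
from fractions import Fraction

# Ann's payoffs in the Figure 1 game, keyed by (Ann's action, Bob's action).
u_ann = {("u", "l"): 1, ("u", "r"): 0, ("d", "l"): 0, ("d", "r"): 1}

def is_mixed_strategy(m, actions):
    """Check that m is a probability measure over a finite action set."""
    return (set(m) <= set(actions)
            and all(p >= 0 for p in m.values())
            and sum(m.values()) == 1)

def expected_utility(a_mix, b_mix):
    """Ann's expected utility, assuming independent randomization."""
    return sum(pa * pb * u_ann[(a, b)]
               for a, pa in a_mix.items()
               for b, pb in b_mix.items())

half = Fraction(1, 2)
bob = {"l": Fraction(3, 5), "r": Fraction(2, 5)}  # e.g. 60% l, 40% r
pure_u = {"u": 1}  # a pure strategy is a degenerate mixed strategy

print(is_mixed_strategy(bob, ("l", "r")))                  # True
print(expected_utility(pure_u, bob))                       # 3/5
print(expected_utility({"u": half, "d": half}, bob))       # 1/2
```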

Mixed strategies play an important role in game theory, especially when it comes to the existence of Nash equilibria. However, the interpretation of mixed strategies is controversial (see, for instance, Rubinstein 1991: 913). The main issue is whether players should be seen as genuinely randomizing—i.e., as delegating their choices to some randomization device—or whether mixed strategies capture something else, such as the opponents’ uncertainty about a player’s choice (cf. Zollman 2022 and Icard 2021). We return to the interpretation of mixed strategies in Section 3.3.2.

1.2 Epistemic Game Theory

Epistemic game theory emerged as a well-defined research program in the 1980s as a response to the equilibrium refinement program. The equilibrium refinement program (see van Damme 1983 for an overview) started with the observation that the Nash equilibrium (see Section 3.3 for a definition of Nash equilibrium) does not always provide a unique or compelling solution to a game. The equilibrium refinement program aims to identify more desirable solutions to a game by imposing additional criteria on the set of Nash equilibria. These refined equilibrium concepts were often based on intuitive judgments about what constituted rational play in games. The development of epistemic game theory was motivated by a desire to formalize these intuitive judgments. Armbruster & Böge (1979) is arguably the earliest contribution to this approach, but other notable works include Spohn (1982), Bernheim (1984), Pearce (1984), and Tan & Werlang (1988), all of which present clear statements contrasting the epistemic program with the equilibrium refinement program. Consult Perea (2014b) for a more comprehensive discussion of the history of epistemic game theory.

One of the objectives of epistemic game theory is to characterize the behavior of rational players who mutually recognize each other’s rationality, where rationality is typically understood as in standard decision theory (see Briggs 2014 [2019]). This approach to the study of games is nicely encapsulated by the following:

There is no special concept of rationality for decision making in a situation where the outcomes depend on the actions of more than one agent. The acts of other agents are, like chance events, natural disasters and acts of God, just facts about an uncertain world that agents have beliefs and degrees of belief about. The utilities of other agents are relevant to an agent only as information that, together with beliefs about the rationality of those agents, helps to predict their actions. (Stalnaker 1996: 136)

A central component of an epistemic analysis of a game is a description of what the players know and believe about each other. In epistemic game theory, there are two main sources of uncertainty for the players:

- Strategic uncertainty: What will the other players do?

- Higher-order information: What are the other players thinking?

Of course, game theorists studied uncertainty in games long before the emergence of epistemic game theory. This work has largely focused on two other sources of uncertainty in games:

- Information about the structure of the game (called complete/incomplete information): Who else is involved in the game? What actions are available? What are the payoffs for each player? This type of uncertainty in games is briefly discussed in Section 1.4.

- Information about the play of the game (called perfect/imperfect information): Which moves have been played? This type of uncertainty in games is briefly discussed in Section 1.5.

These four sources of uncertainty in games are conceptually important, but they are neither necessarily exhaustive nor mutually exclusive. John Harsanyi, for instance, argued that all uncertainty about the structure of the game (i.e., all possible incompleteness in information) can be reduced to uncertainty about the payoffs (Harsanyi 1967–68; cf. also Hu & Stuart 2002 and Lorini & Schwarzentruber 2010). In a similar vein, Kadane & Larkey argue that for a player

in a single-play game, all aspects of his opinion except his [opinion] about his opponent’s behavior are irrelevant, and can be ignored in the analysis by integrating them out of the joint opinion. (1982: 116)

1.3 Stages of Decision Making

It is standard in the game theory literature to distinguish three stages of the decision making process: ex ante, ex interim, and ex post. At one extreme is the ex ante stage where no decision has yet been made. The other extreme is the ex post stage where the choices of all players are openly disclosed. In between these two extremes is the ex interim stage where the players have made their decisions, but they are still uninformed about the choices of the other players.

These distinctions are not intended to be sharp. Rather, they describe various stages of information disclosure for the players during the decision-making process. At the ex ante stage, little is known except the structure of the game, who is taking part, and possibly (but not necessarily) something about the other players’ beliefs. At the ex post stage the game is basically over: all players have made their decision and these are now irrevocably out in the open. This does not mean that all uncertainty is removed as an agent may remain uncertain about what exactly the others were expecting of her. In between these two extremes lies a whole gradation of states of information disclosure that we loosely refer to as “the” ex interim stage. Common to these states of information disclosure is the fact that the agents have made a decision, although not necessarily an irrevocable one.

In this entry, we focus on the ex interim stage of decision making. This is in line with much of the literature on the epistemic foundations of game theory as it allows for a straightforward assessment of the players’ rationality given their expectations about what their opponents will do. Focusing on the ex interim stage does raise some interesting questions about how a player should react to learning that she did not choose “rationally” (cf. Stalnaker 1999, Section 4, and Skyrms 1990). Note that this question is different from the one of how players should revise their beliefs upon learning that others did not choose rationally. This second question is very relevant in games in which players choose sequentially, and will be addressed in Section 3.2.

1.4 Incomplete Information

A natural question to ask about any mathematical model of a game situation is how the analysis changes if the players are uncertain about some parameters of the model. This motivated Harsanyi’s seminal 1967–68 paper that introduced a model of beliefs for players with incomplete information about some aspect of a game. Building on these ideas, there is an extensive literature that studies Bayesian games, that is, games in which the players are uncertain about some aspect of the game. Consult Leyton-Brown & Shoham (2008: ch. 7) for a concise summary and pointers to the relevant literature. We discuss Harsanyi’s approach to modeling higher-order beliefs in Section 2.2. Following Brandenburger 2010 (Sections 4 and 5), we note two crucial differences between the study of Bayesian games and epistemic game theory.

- In a Bayesian game, the only source of uncertainty for a player is the payoffs of the game, what the other players believe are the correct payoffs, what other players believe that the other players believe about the payoffs, and so on. The underlying idea is that the players’ (higher-order) beliefs about the payoffs in a game completely determine the (higher-order) beliefs about the other aspects of the game. In particular, if a player comes to know the payoffs of the other players, then that player is certain (and correct) about the possible (rational) choices of the other players.[2] As discussed in Section 1.2, in epistemic game theory, the models of beliefs focus on other sources of uncertainty for the players, such as strategic uncertainty.

- In a Bayesian game, it is assumed that all players choose optimally given their information. That is, all players choose a strategy that maximizes their expected utility given their beliefs about the game, beliefs about what other players believe about the game, and so on. This means, in particular, that players do not entertain the possibility that their opponents may choose “irrationally”. In contrast, epistemic game theory models allow for the possibility that players may believe that the other players choose irrationally.

Note that these assumptions are not inherent in the formalism that Harsanyi used to represent the players’ beliefs in a game of incomplete information. Rather, they are conventions followed by Harsanyi and subsequent researchers studying Bayesian games.

1.5 Imperfect Information and Perfect Recall

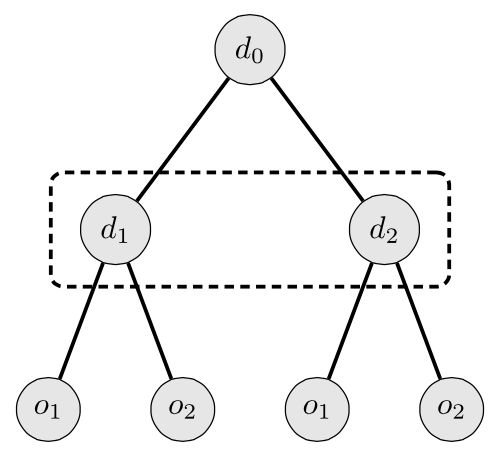

The defining feature of a game in strategic form is that the players choose their actions simultaneously. This is not an assumption about the precise timing of the players’ choices in the game, but rather an assumption about what the players know and believe about the choices of the other players in the game. More generally, a game in strategic form is an example of a game with imperfect information in which the players may not be perfectly informed about the moves of their opponents or the outcome of chance moves by nature. Two players who do not move at the same time, but where the later mover is not informed about the earlier mover’s choice, can be pictured as follows (where, for instance, the first player chooses at \(d_0\), the second player’s decision nodes are \(d_1\) and \(d_2\), and the labels for the available actions are suppressed):

Figure 2 [An extended description of figure 2 is in the supplement.]

The interpretation is that the decision made at the first node (\(d_0\)) is forgotten or not observed, and so the second decision is made under uncertainty about whether the decision maker is at node \(d_1\) or \(d_2\). See Osborne (2004: ch. 9 & 10) for the general theory of games with imperfect information. Allowing imperfect information in a game raises an interesting question about whether players may be imperfectly informed about their own past decisions.

Harold Kuhn (1953) introduced the distinction between perfect and imperfect recall in games with imperfect information. The key idea is that players have perfect recall when they remember all of their own past moves. A standard assumption in game theory is that all players have perfect recall—i.e., they may be uncertain about previous choices of their opponents or nature, but they do remember all of their own moves. The perfect recall assumption has not only played an important role in game theory (Bonanno 2004; Kaneko & Kline 1995; Piccione & Rubinstein 1997a), but also in the study of logics of knowledge and time (Halpern, van der Meyden, & Vardi 2004), and in computational models of poker (Waugh et al. 2009).

As we noted in Section 1.3, there are different stages to the decision making process. Differences between these stages of decision-making are more pronounced in sequential decision problems in which decision makers choose at different moments in time. There are two ways to think about the decision making process in sequential decision problems. The first is to focus on the initial “planning stage”. Initially (before any moves are made), the decision makers settle on a plan specifying the (possibly random) move they will make at each of their choice nodes. Then, the players start making their respective moves following the plan which they have committed to without reconsidering their options at each choice node. Alternatively, the decision makers can make “local judgements” at each of their choice nodes, always choosing the best option given the information that is currently available to them. Kuhn’s Theorem (1953) shows that if players have perfect recall, then a plan is optimal if, and only if, it is locally optimal—that is, an optimal plan leads to the same sequence of choices that result from each decision maker choosing optimally at their decision node (see Maschler, Solan, & Zamir 2013: 219–250, for a proof of this classic result).

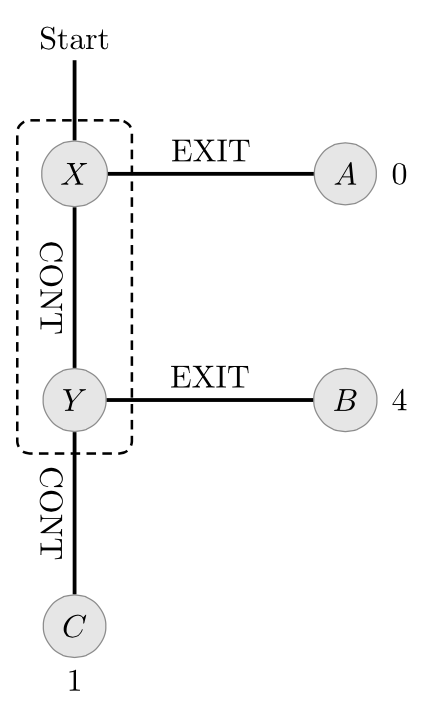

The assumption of perfect recall is crucial for Kuhn’s result. This is demonstrated by the so-called absent-minded driver’s problem of Piccione & Rubinstein (1997a):

An individual is sitting late at night in a bar planning his midnight trip home. In order to get home he has to take the highway and get off at the second exit. Turning at the first exit leads into a disastrous area (payoff 0). Turning at the second exit yields the highest reward (payoff 4). If he continues beyond the second exit, he cannot go back and at the end of the highway he will find a motel where he can spend the night (payoff 1). The driver is absentminded and is aware of this fact. At an intersection, he cannot tell whether it is the first or the second intersection and he cannot remember how many he has passed (one can make the situation more realistic by referring to the 17th intersection). While sitting at the bar, all he can do is to decide whether or not to exit at an intersection. (Piccione & Rubinstein 1997a: 7)

The decision tree for the absent-minded driver is depicted below:

Figure 3 [An extended description of figure 3 is in the supplement.]

This problem shows that there may be a conflict between what the decision maker commits to do while planning at the bar and what he thinks is best at the first intersection:

Planning stage: While planning his trip home at the bar, the decision maker is faced with a choice between “Continue; Continue” and “Exit”. Since he cannot distinguish between the two intersections, he cannot plan to “Exit” at the second intersection (he must plan the same behavior at both \(X\) and \(Y\)). Since “Exit” will lead to the worst outcome (with a payoff of 0), the optimal strategy is “Continue; Continue” with a guaranteed payoff of 1.

Action stage: When arriving at an intersection, the decision maker is faced with a local choice of either “Exit” or “Continue” (possibly followed by another decision). Now the decision maker knows that since he committed to the plan of choosing “Continue” at each intersection, it is possible that he is at the second intersection. Indeed, since that plan takes him through both intersections, he concludes that he is at the first intersection with probability 1/2. But then, his expected payoff for “Exit” is \(\tfrac{1}{2}\cdot 0 + \tfrac{1}{2}\cdot 4 = 2\) (0 if he is at the first intersection, 4 if he is at the second), which is greater than the payoff of 1 guaranteed by following the strategy he previously committed to. Thus, he chooses to “Exit”.
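The arithmetic behind the two stages can be checked in a few lines. This is a minimal sketch that restricts the driver to the pure plans discussed above; the function names are hypothetical, and `local_payoff` assumes that after a one-time choice of “Continue” he keeps following his committed plan.

```python
from fractions import Fraction

# Payoffs from the story: exit at the first intersection, exit at the
# second intersection, or continue past both to the motel.
EXIT_FIRST, EXIT_SECOND, MOTEL = 0, 4, 1

def plan_payoff(action):
    """Payoff of a pure plan made at the bar. The driver cannot tell X from Y,
    so the plan must prescribe the same action at both intersections."""
    return EXIT_FIRST if action == "Exit" else MOTEL  # "Continue; Continue"

def local_payoff(action, p_first):
    """Expected payoff of a local choice at an intersection, given belief
    p_first of being at the first one (later choices follow the plan)."""
    if action == "Exit":
        return p_first * EXIT_FIRST + (1 - p_first) * EXIT_SECOND
    return MOTEL  # continuing under the plan always ends at the motel

half = Fraction(1, 2)
print(plan_payoff("Exit"), plan_payoff("Continue"))                  # 0 1
print(local_payoff("Exit", half), local_payoff("Continue", half))    # 2 1
```

The planning stage favors “Continue” (1 > 0), while the action stage, with belief 1/2, favors “Exit” (2 > 1), which is exactly the conflict described in the text.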

This problem has been discussed by a number of different researchers.[3] It is beyond the scope of this article to discuss the details of the different analyses. An entire issue of Games and Economic Behavior (Volume 20, 1997) was devoted to the analysis of this problem. For a representative sampling of the approaches to this problem, see Aumann, Hart, & Perry (1997); Board (2003); Halpern (1997); Piccione & Rubinstein (1997b); Kline (2002); Levati, Uhl & Zultan (2014); Schwarz (2015); and Milano & Perea (2023).

2. Game Models

Researchers interested in the foundations of decision and game theory, epistemic and doxastic logic, and formal epistemology have developed many different formal models that can describe a variety of informational attitudes important for assessing the choices of players in a game. It is beyond the scope of this article to survey the details of these different models (cf. Genin & Huber 2020 [2022] and Weisberg 2015 [2021]). In this section, we introduce the two main types of models found in the epistemic game theory literature: Epistemic-Probability Models, also called Aumann- or Kripke-structures (Aumann 1999a; Fagin, Halpern, Moses, & Vardi 1995), and Type Spaces (Harsanyi 1967–68; Siniscalchi 2008).[4]

A model of a game represents both the strategies chosen by each player and the players’ opinions about the choices and opinions of the other players. The players’ opinions are described in terms of the players’ hard and soft informational attitudes (cf. van Benthem 2011). Hard informational attitudes capture what a player is certain of in a game. They are veridical, fully introspective and not revisable. At the ex interim stage, for instance, the players have hard information about their own choice. They “know” which strategy they chose, they know that they know this, and no new incoming information could make them change their opinion about which strategy they chose. As this phrasing suggests, “knowledge” is often used, in the absence of better terminology, to describe this very strong type of informational attitude.[5] Soft informational attitudes, by contrast, may fail to be veridical, may fail to be fully introspective, or may be revised in the presence of new information. As such, they come much closer to beliefs.[6] The game models discussed in this entry can be broadly described as “possible worlds models”, which are typically associated with a propositional view of the players’ informational attitudes. Players have beliefs/knowledge about propositions, called events in the game-theory literature, represented as sets of possible worlds. These basic modeling choices are not uncontroversial, but such issues are not our concern in this entry.

2.1 Epistemic-Probability Models

We start with models that are familiar from the philosophical logic (van Benthem 2010) and computer science (Fagin, Halpern, et al. 1995) literatures. These models were introduced to game theory by Robert Aumann in his seminal paper Agreeing to Disagree (1976).

The starting point is a non-empty (finite) set \(S\) of strategy profiles from some underlying game[7] and a set \(W\) of possible worlds, or (epistemic) states. Each possible world is associated with a unique element of \(S\) (i.e., there is a function from \(W\) to \(S\), but this function need not be one–one or even onto). It is crucial for the analysis of rationality in games that different possible worlds may be associated with the same strategy profile in order to represent different states of information for a player.

2.1.1 Epistemic Models

Before giving the definition of an epistemic model for a game, we need some notation. Let \(W\) be a non-empty set, elements of which are called states, or possible worlds. A subset \(E\subseteq W\) is called an event or proposition. Given events \(E\subseteq W\) and \(F\subseteq W\), we use standard set-theoretic notation for intersection (\(E\cap F\), read “\(E\) and \(F\)”), union (\(E\cup F\), read “\(E\) or \(F\)”) and (relative) complement (\(-{E}\), read “not \(E\)”).

We say that an event \(E\subseteq W\) occurs at state \(w\) when \(w\in E\). Given a set \(X\), we write \(\wp(X)\) for the powerset of \(X\), i.e., the set of all subsets of \(X\). A set \(\Pi\subseteq \wp(W)\) is called a partition on \(W\) when 1. the sets in \(\Pi\) are pairwise disjoint: for all \(E, F\in\Pi\) with \(E\neq F\), \(E\cap F=\varnothing\); and 2. the union of the sets in \(\Pi\) is \(W\): \(\bigcup\Pi = W\). If \(\Pi\) is a partition on \(W\) and \(w\in W\), then \(\Pi(w)\) is the unique element of \(\Pi\) that contains \(w\).
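The two partition conditions and the cell \(\Pi(w)\) translate directly into code. The sketch below is illustrative only: the helper names `is_partition` and `cell` are hypothetical, and cells are represented as Python sets over a small example set \(W\).

```python
from itertools import chain

def is_partition(cells, W):
    """Check the two conditions: distinct cells are pairwise disjoint,
    and the union of the cells is W."""
    cells = [frozenset(c) for c in cells]
    pairwise_disjoint = all(
        not (E & F) for k, E in enumerate(cells) for F in cells[k + 1:]
    )
    covers_W = set(chain.from_iterable(cells)) == set(W)
    return pairwise_disjoint and covers_W

def cell(cells, w):
    """Pi(w): the unique cell containing w (raises ValueError if none or several)."""
    (c,) = [frozenset(E) for E in cells if w in E]
    return c

W = {1, 2, 3, 4}
Pi = [{1, 3}, {2, 4}]
print(is_partition(Pi, W))                      # True
print(is_partition([{1, 2}, {2, 3}, {4}], W))   # False: cells overlap
print(sorted(cell(Pi, 3)))                      # [1, 3]
```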

Definition 2.1 (Epistemic Model) Suppose that

\[G=\langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\]

is a game in strategic form. An epistemic model for \(G\) is a triple \(\langle W, (\Pi_i)_{i\in N },\sigma\rangle\), where \(W\) is a nonempty set, for each \(i\in N \), \(\Pi_i\) is a partition on \(W\), and \(\sigma:W\rightarrow \times_{i\in N} S_i\).

The function \(\sigma\) assigns to each state a unique outcome of the game. If \(\sigma(w) = \sigma(w')\) then the two worlds \(w\) and \(w'\) agree on the players’ choices in the game, but, crucially, the players may have different information at \(w\) and \(w'\) (i.e., \(w\) and \(w'\) may belong to different elements of \(\Pi_i\)). So, the possible worlds \(W\) are richer than the elements of \(S\) (more on this below).

Given a state \(w\in W\), the element of the partition \(\Pi_i\) containing \(w\), denoted \(\Pi_i(w)\), is called player \(i\)’s information set at \(w\). Following standard terminology, if \(\Pi_i(w)\subseteq E\), we say the player \(i\) knows that the event \(E\) holds at state \(w\). Formally, for each player \(i\) we define a knowledge function that assigns to every event \(E\) the event that the player \(i\) knows that \(E\):

Definition 2.2 (Knowledge Function) Let \(\cM=\langle W,(\Pi_i)_{i\in N },\sigma\rangle\) be an epistemic model for a game. The knowledge function for \(i\in N\) based on \(\cM\) is the function \(K_i:\wp(W)\rightarrow\wp(W)\) defined as follows: for all \(E\subseteq W\),

\[K_i(E)=\{w \mid \Pi_i(w)\subseteq E\}\]

Remark 2.3 It is often convenient to use equivalence relations rather than partitions in an epistemic model. In this case, an epistemic model is a triple \(\langle W,(\sim_i)_{i\in N },\sigma \rangle\) where \(W\) and \(\sigma\) are as above and for each \(i\in N \), \(\sim_i\subseteq W\times W\) is a reflexive, transitive and symmetric relation on \(W\). For each \(w\in W\) let \([w]_i=\{v\in W \mid w\sim_i v\}\) be the equivalence class of \(w\). Since there is a correspondence between equivalence relations and partitions,[8] we will abuse notation and use \(\sim_i\) and \(\Pi_i\) interchangeably. In particular, an alternative definition of \(K_i\) is \(K_i(E)=\{w\mid [w]_i\subseteq E\}\). That is, \(w\in K_i(E)\) when \(E\) contains all states equivalent to \(w\) according to \(\sim_i\).
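Since each state lies in exactly one cell, \(K_i(E)\) is simply the union of the cells of \(\Pi_i\) that are contained in \(E\). The sketch below computes it both from a partition and, following Remark 2.3, from the induced equivalence relation, using a hypothetical four-state model; the function names are illustrative.

```python
# A hypothetical four-state model with one player's partition.
W = {"w1", "w2", "w3", "w4"}
Pi_a = [{"w1", "w3"}, {"w2", "w4"}]

def K(partition, E):
    """K_i(E) = {w | Pi_i(w) ⊆ E}: the union of the cells contained in E."""
    E = set(E)
    return set().union(*(c for c in partition if c <= E))

def equivalence_relation(partition):
    """The relation ~_i induced by a partition: w ~_i v iff they share a cell."""
    return {(w, v) for c in partition for w in c for v in c}

def K_rel(sim, W, E):
    """Alternative definition from Remark 2.3: K_i(E) = {w | [w]_i ⊆ E}."""
    return {w for w in W if {v for (x, v) in sim if x == w} <= set(E)}

U = {"w1", "w3"}
print(sorted(K(Pi_a, U)))        # ['w1', 'w3']
print(K(Pi_a, {"w1", "w2"}))     # set()
sim_a = equivalence_relation(Pi_a)
print(all(K(Pi_a, E) == K_rel(sim_a, W, E) for E in [U, {"w1"}, W, set()]))  # True
```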

Partitions (or equivalence relations) are intended to represent the players’ hard information. It is well-known that the knowledge function based on an epistemic model satisfies the following properties (see Rendsvig & Symons 2019 [2021] for a discussion). For all players \(i\) and events \(E\) and \(F\):

- (Monotonicity) If \(E\subseteq F\), then \(K_i(E)\subseteq K_i(F)\)

- (Conjunction) \(K_i(E)\cap K_i(F) = K_i(E\cap F)\)

- (Truth) \(K_i(E)\subseteq E\)

- (Positive Introspection) \(K_i(E) \subseteq K_i(K_i(E))\)

- (Negative Introspection) \(-K_i(E) \subseteq K_i(-K_i(E))\)
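On a finite model, these five properties can be checked by brute force over every pair of events. The sketch below does this for one hypothetical four-state partition; it illustrates the properties on that single model and is of course not a proof that they hold in general.

```python
from itertools import chain, combinations

W = frozenset({"w1", "w2", "w3", "w4"})
Pi = [frozenset({"w1", "w3"}), frozenset({"w2", "w4"})]  # hypothetical partition

def K(E):
    """Knowledge function: the union of the cells contained in E."""
    return frozenset().union(*(c for c in Pi if c <= E))

def neg(E):
    """Relative complement -E = W minus E."""
    return W - E

# All 16 events (subsets of W).
events = [frozenset(s) for s in chain.from_iterable(
    combinations(sorted(W), r) for r in range(len(W) + 1))]

checks = {
    "Monotonicity": all(K(E) <= K(F) for E in events for F in events if E <= F),
    "Conjunction": all(K(E) & K(F) == K(E & F) for E in events for F in events),
    "Truth": all(K(E) <= E for E in events),
    "Positive Introspection": all(K(E) <= K(K(E)) for E in events),
    "Negative Introspection": all(neg(K(E)) <= K(neg(K(E))) for E in events),
}
print(all(checks.values()))  # True
```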

Remark 2.4 The players’ beliefs can be represented by changing the properties of the relations associated with the players in an epistemic model of a game. For instance, a doxastic model of a game \(G\) is a tuple \(\langle W,(R_i)_{i\in N },\sigma\rangle\) where \(W\) and \(\sigma\) are defined as in Definition 2.1, and for each \(i\in N\), \(R_i\subseteq W\times W\) is serial (for all \(w\in W\) there is a \(v\in W\) such that \(w\mathrel{R_i} v\)), transitive (for all \(w,v,x\in W\), if \(w\mathrel{R_i} v\) and \(v\mathrel{R_i}x\), then \(w\mathrel{R_i} x\)) and Euclidean (for all \(w,v,x\in W\), if \(w\mathrel{R_i} v\) and \(w\mathrel{R_i}x\), then \(v\mathrel{R_i} x\)). For states \(w,v\in W\) and a player \(i\), \(w\mathrel{R_i} v\) means that \(v\) is a doxastic possibility for player \(i\) at state \(w\). Then, a player \(i\) believes an event \(E\subseteq W\) at state \(w\) when \(\{v\mid w\mathrel{R}_i v\}\subseteq E\). This notion of belief shares all of the properties of \(K_i\) listed above except Truth (this is replaced with a weaker assumption of “consistency” stating that players do not believe contradictions).
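A two-state example shows how belief can come apart from truth. The relation below is a hypothetical choice that is serial, transitive and Euclidean but not reflexive: at \(w_1\) the player considers only \(w_2\) possible. The sketch checks the three properties and shows that the player believes \(\{w_2\}\) at \(w_1\) even though \(w_1\notin\{w_2\}\) (Truth fails), while seriality still rules out believing the empty event (consistency).

```python
W = {"w1", "w2"}
R = {("w1", "w2"), ("w2", "w2")}  # hypothetical doxastic relation R_i

def successors(w):
    """The states v with w R_i v."""
    return {v for (x, v) in R if x == w}

serial = all(successors(w) for w in W)
transitive = all((w, x) in R for (w, v) in R for (v2, x) in R if v == v2)
euclidean = all((v, x) in R for (w, v) in R for (w2, x) in R if w == w2)

def B(E):
    """Belief operator: states whose every R_i-successor lies in E."""
    return {w for w in W if successors(w) <= set(E)}

E = {"w2"}
print(serial, transitive, euclidean)   # True True True
print(sorted(B(E)))                    # ['w1', 'w2']
print(B(set()))                        # set()
```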

To illustrate an epistemic model of a game, consider the following coordination game between Ann (a) and Bob (b).

|   |   | b   |     |
|---|---|-----|-----|
|   |   | l   | r   |
| a | u | 3,3 | 0,0 |
|   | d | 0,0 | 1,1 |

Figure 4: A strategic coordination game between two players a and b.

The set of strategy profiles is \(S=\{(u,l),(d,l),(u,r),(d,r)\}\) and the set of players is \(N =\{a, b\}\). To complete the description of the game model we must specify the set \(W\) of possible worlds and a partition on \(W\) for each player.

For simplicity, we start by assuming \(W=S\), so there is exactly one possible world corresponding to each strategy profile. There are many different partitions on \(W\) for the players that we can use to complete the description of this simple epistemic model. However, not all of the partitions are appropriate for analyzing the ex interim stage of the decision-making process. For example, suppose \(\Pi_{a}=\Pi_{b}=\{W\}\) and consider the event \(U=\{(u,l),(u,r)\}\) representing the event that Ann chooses \(u\). Notice that \(K_a(U)=\emptyset\) since for all \(w\in W\), \(\Pi_a(w)\not\subseteq U\), so there is no state where Ann knows that she chooses \(u\). This means that this model is appropriate for representing the ex ante rather than the ex interim stage of decision-making in the game. This is easily fixed with an additional assumption:

An epistemic model of a game \(\langle W, (\Pi_i)_{i\in N },\sigma \rangle\) is an ex interim epistemic model if for all \(i\in N \) and \(w,v\in W\), if \(v\in\Pi_i(w)\) then \(\sigma_i(w)=\sigma_i(v)\)

where \(\sigma_i(w)\) is player \(i\)’s component of the strategy profile \(s\in S\) assigned to \(w\) by \(\sigma\). An example of an ex interim epistemic model with states \(W\) is:

- \(\Pi_a=\{\{(u,l),(u,r)\},\{(d,l),(d,r)\}\}\) and

- \(\Pi_b=\{\{(u,l),(d,l)\},\{(u,r),(d,r)\}\}\).

Note that this simply reinterprets the game matrix in Figure 4 as an epistemic model where the rows are a’s information sets and the columns are b’s information sets.
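The ex interim condition says that every cell of \(\Pi_i\) agrees on \(\sigma_i\). The sketch below, assuming \(W=S\) and taking \(\sigma\) to be the identity as in the example above, checks the condition for the row/column partitions and for the trivial partition \(\{W\}\) discussed earlier. The names `is_ex_interim` and `PLAYER_INDEX` are hypothetical helpers for this illustration.

```python
from itertools import product

S = list(product(("u", "d"), ("l", "r")))  # W = S in this example
sigma = {w: w for w in S}                   # each state is its own profile
PLAYER_INDEX = {"a": 0, "b": 1}             # position of sigma_i in a profile

def is_ex_interim(partitions, sigma):
    """True iff, for every player i, all states in each cell of Pi_i
    assign player i the same strategy (each player knows her own choice)."""
    return all(
        len({sigma[w][PLAYER_INDEX[i]] for w in c}) == 1
        for i, cells in partitions.items()
        for c in cells
    )

Pi_a = [{("u", "l"), ("u", "r")}, {("d", "l"), ("d", "r")}]  # rows
Pi_b = [{("u", "l"), ("d", "l")}, {("u", "r"), ("d", "r")}]  # columns
print(is_ex_interim({"a": Pi_a, "b": Pi_b}, sigma))          # True
print(is_ex_interim({"a": [set(S)], "b": [set(S)]}, sigma))  # False
```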

Unless otherwise stated, we assume that our epistemic models are ex interim. The class of ex interim epistemic models is very rich: it includes models describing the (hard) information the players have about their own choices, the (possible) choices of the other players and the players’ higher-order (hard) information (e.g., “a knows that b knows that…”).

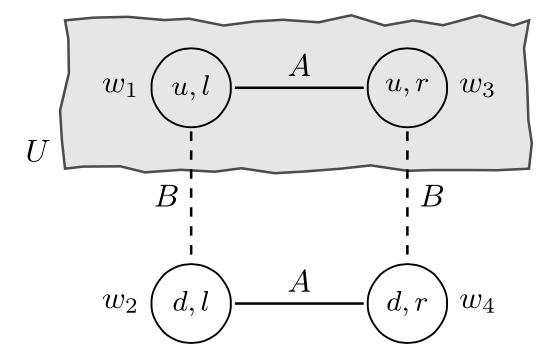

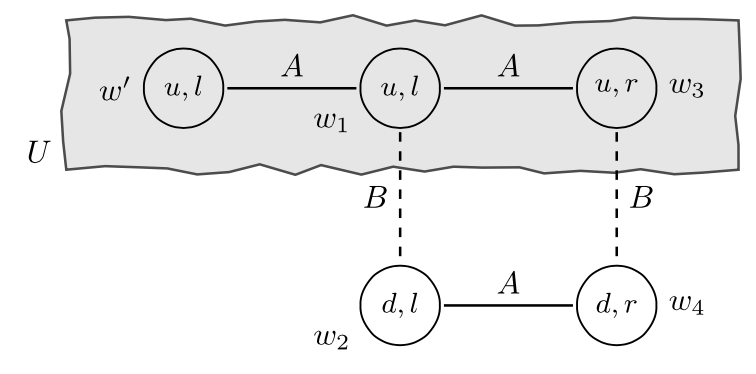

It is standard to use the following diagrammatic representation of an epistemic model to ease exposition. Suppose that \(W=\{w_1, w_2, w_3, w_4\}\) and \(\sigma\) is the function where \(\sigma(w_1)=(u, l), \sigma(w_2)=(d, l), \sigma(w_3)=(u, r),\) and \(\sigma(w_4)=(d, r)\). Furthermore, the partitions for the players are \(\Pi_a=\{\{w_1, w_3\}, \{w_2, w_4\}\}\) and \(\Pi_b=\{\{w_1, w_2\}, \{w_3, w_4\}\}\). This epistemic model is depicted in Figure 5, where the nodes represent the states, with the strategy profile associated with each state displayed inside the node, and there is an (undirected) edge between states \(w_i\) and \(w_j\) when \(w_i\) and \(w_j\) are in the same partition cell. We use a solid line labeled with a for a’s partition and a dashed line labeled with b for b’s partition. Note that reflexive edges and edges that can be inferred by transitivity are typically not represented (so, for instance, there is no edge between \(w_1\) and itself). The event \(U=\{w_1,w_3\}\) representing the proposition that “a chooses strategy \(u\)” is the shaded gray region.

Figure 5 [An extended description of figure 5 is in the supplement.]

Notice that the following events are true at all states:

-

\(-K_b(U) = W\): at each state, “b does not know that a chose action \(u\)”.

-

\(K_b(K_a(U)\cup K_a(-U)) = W\): at each state, “b knows that a knows whether she has chosen action \(u\)”.

-

\(K_a(-K_b(U))=W\): at each state, “a knows that b does not know that she has chosen action \(u\)”.
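These three claims can be verified directly on the model in Figure 5. The sketch below is a minimal Python encoding, assuming \(K_i(E)=\{w \mid \Pi_i(w)\subseteq E\}\) and complements taken relative to \(W\); the names `K`, `neg`, and `event_1` to `event_3` are illustrative only.

```python
# Figure 5: four states, sigma maps each state to a strategy profile (Ann, Bob).
W = frozenset({"w1", "w2", "w3", "w4"})
sigma = {"w1": ("u", "l"), "w2": ("d", "l"), "w3": ("u", "r"), "w4": ("d", "r")}
Pi = {
    "a": [frozenset({"w1", "w3"}), frozenset({"w2", "w4"})],  # solid lines (Ann)
    "b": [frozenset({"w1", "w2"}), frozenset({"w3", "w4"})],  # dashed lines (Bob)
}


def K(i, event):
    """K_i(E): the union of player i's cells that are contained in E."""
    return frozenset(w for c in Pi[i] if c <= event for w in c)


def neg(event):
    """Complement -E relative to W."""
    return W - event


U = frozenset(w for w in W if sigma[w][0] == "u")  # "a chooses u" = {w1, w3}

event_1 = neg(K("b", U))                       # -K_b(U)
event_2 = K("b", K("a", U) | K("a", neg(U)))   # K_b(K_a(U) | K_a(-U))
event_3 = K("a", neg(K("b", U)))               # K_a(-K_b(U))
print(event_1 == W, event_2 == W, event_3 == W)  # True True True
```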

In particular, these events are true at state \(w_1\) where a has chosen \(u\) (i.e., \(w_1\in U\)). The first event makes sense given the assumptions about the available information at the ex interim stage: each player knows their own choice but, in general, not the other players’ choices. The second event is a natural assumption about b’s information about a’s choice in the game: b has the information that a has, in fact, settled on some choice. But what reason does a have to conclude that b does not know she has chosen \(u\) (the third event)? This is a substantive assumption about what a knows about what b expects her to do. Indeed, in certain contexts, a may have very good reasons to think it is possible that b actually knows that she chose \(u\). There is an ex interim epistemic model where this event (\(-K_a(-K_b(U))\)) is true at \(w_1\), but this requires adding an additional element to \(W\):

Figure 6 [An extended description of figure 6 is in the supplement.]

Notice that since \(\Pi_b(w')=\{w'\}\subseteq U\) we have \(w'\in K_b(U)\). That is, b knows that a chooses \(u\) at state \(w'\). Finally, a simple calculation shows that \(w_1\in -K_a(-K_b(U))\), as desired. Of course, there are other substantive assumptions built into this new model (e.g., at \(w_1\), b knows that a does not know he will choose \(l\)) which may require additional modifications (cf. Roy & Pacuit 2013). This raises a number of interesting conceptual and technical issues which we discuss in Section 2.4.

2.1.2 Adding Beliefs

There are different ways to extend an epistemic model of a game with the players’ beliefs. We start by sketching an approach motivated by research on belief revision (see van Benthem 2011; Baltag & Renne 2016; and Baltag & Smets 2006 for an overview).

An epistemic-plausibility model of a game \(G = \langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\) is a tuple \(\langle W, (\Pi_i)_{i\in N }, (\succeq_i)_{i\in N },\sigma\rangle\) where \(W\) is a nonempty finite set of states, \(\langle W, (\Pi_i)_{i\in N },\sigma\rangle\) is an epistemic model of \(G\) and for each \(i\in N \), \(\succeq_i\) is a reflexive and transitive relation on \(W\) satisfying the following properties[9], for all \(w,v\in W\),

- (plausibility implies possibility) if \(v\succeq_i w\) then \(v\in \Pi_i(w)\), and

- (locally-connected) if \(v\in \Pi_i(w)\) then either \(w\succeq_i v\) or \(v\succeq_i w\).

The plausibility ordering not only describes the players’ beliefs, but also how the players revise their beliefs in the presence of new information (see Section 4.3 of Baltag & Renne 2016 for a discussion). Different types of belief operators can be defined using the plausibility ordering, but we first need some notation. First, for an event \(E\subseteq W\), let

\[\Max_{\succeq_i}(E)=\{v\in E\ |\ v\succeq_i w \text{ for all \(w\in E\) }\}\]denote the set of maximal elements of \(E\) according to \(\succeq_i\). Second, the plausibility relation \(\succeq_i\) can be lifted to subsets of \(W\) as follows: \(X\succeq_i Y\text{ if, and only if, \(x\succeq_i y\) for all \(x\in X\) and \(y\in Y\).}\)

-

Belief: For any event \(E\subseteq W\), let

\(B_i(E)=\{w \mid \Max_{\succeq_i}(\Pi_i(w))\subseteq E\}\)

This is the usual notion of belief which satisfies the standard properties discussed above (e.g., consistency and positive and negative introspection).

-

Robust Belief: For any event \(E\subseteq W\), let

\(\textit{RB}_i(E)=\{w \mid v\in E, \mbox{ for all } v \mbox{ with } v \succeq_i w\}\)

So, \(E\) is robustly believed if it is true in all worlds at least as plausible as the current world. This stronger notion of belief has also been called certainty by some authors (cf. Leyton-Brown & Shoham 2008: sec. 13.7).

-

Strong Belief: For any event \(E\subseteq W\), let

\[ \begin{multline} \SB_i(E)=\{w \mid E \cap \Pi_i(w) \neq \emptyset \text{ and } \\ (E \cap \Pi_i(w)) \succeq_{i} (- E \cap \Pi_i(w))\} \end{multline} \]So, \(E\) is strongly believed provided it is epistemically possible and player \(i\) considers every state in \(E\) (within her information cell) at least as plausible as every state in the complement of \(E\).

-

Conditional Belief: For events \(E, F\subseteq W\), let

\[B_i^F(E)=\{w \mid \Max_{\succeq_i}(F\cap \Pi_i(w))\subseteq E\} \]So, ‘\(B_i^F\)’ encodes what agent \(i\) will believe upon receiving (possibly misleading) evidence that \(F\) is true.
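To see how the four operators differ, here is a minimal Python sketch on a small hypothetical model (not one from the text): a single information cell \(\{w_1,w_2,w_3\}\) whose plausibility ordering is encoded by integer ranks, with higher rank meaning more plausible. The ranks and function names are assumptions made for illustration; the operators follow the definitions above.

```python
# Hypothetical plausibility model: one information cell, ranks encode the
# ordering (higher rank = more plausible). Not a model from the text.
W = ["w1", "w2", "w3"]
cell = {w: set(W) for w in W}           # Pi_i(w)
rank = {"w1": 2, "w2": 1, "w3": 0}


def geq(v, w):
    """v >=_i w: v is possible at w and at least as plausible as w."""
    return v in cell[w] and rank[v] >= rank[w]


def max_elements(E):
    """Max(E): the elements of E at least as plausible as every element of E."""
    return {v for v in E if all(geq(v, w) for w in E)}


def B(E):
    """Belief: the most plausible states in the cell all lie in E."""
    return {w for w in W if max_elements(cell[w]) <= E}


def RB(E):
    """Robust belief: E holds at every state at least as plausible as w."""
    return {w for w in W if all(v in E for v in W if geq(v, w))}


def SB(E):
    """Strong belief: E is possible and every E-state in the cell is at least
    as plausible as every non-E-state in the cell."""
    result = set()
    for w in W:
        inside, outside = E & cell[w], cell[w] - E
        if inside and all(geq(x, y) for x in inside for y in outside):
            result.add(w)
    return result


def B_cond(F, E):
    """Conditional belief B^F(E): the most plausible F-states in the cell lie in E."""
    return {w for w in W if max_elements(F & cell[w]) <= E}


E1, E2 = {"w1", "w2"}, {"w1", "w3"}
print(sorted(B(E2)), sorted(SB(E2)))  # ['w1', 'w2', 'w3'] []
```

The event \(\{w_1,w_3\}\) is believed everywhere (it contains the most plausible state) but is not strongly believed, since \(w_3\) is less plausible than \(w_2\).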

The standard approach in epistemic game theory is to represent the players’ beliefs with probabilities rather than with qualitative plausibility orderings:

Definition 2.5 (Epistemic-Probability Model) Suppose that

\[G = \langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\]is a game in strategic form. An epistemic-probability model for \(G\) is a tuple

\[ \langle W,(\Pi_i)_{i\in N },(P_i)_{i\in N },\sigma\rangle \]where \(W\) is a nonempty finite set of states, \(\langle W,(\Pi_i)_{i\in N },\sigma\rangle\) is an epistemic model for \(G\) and for each \(i\in N\), \(P_i:W\rightarrow \Delta(W)\) assigns a probability measure[10] to each element of \(W\) satisfying the following two assumptions:

-

For all \(w,v\in W\), if \(P_i(w)(v)>0\) then \(P_i(w)=P_i(v)\); and

-

For all \(w\in W\) and all \(v\not\in\Pi_i(w)\), \(P_i(w)(v)=0\).

To simplify notation, for each \(i\in N\) and \(w\in W\), write \(p_i^w\) for \(P_i(w)\).

Property 1 says that if \(i\) assigns a non-zero probability to state \(v\) at state \(w\) then the player is assigned the same probability measure at both \(w\) and \(v\). This means that we can view \(P_i\) as assigning a probability measure to each of \(i\)’s information cells. The second property says that any probability measure assigned to an information cell must assign probability zero to all states outside that information cell.

In many applications, it is useful to view the probability measures associated with a player’s information cells as arising from a single probability measure through conditionalization. For each \(i\in N \), player \(i\)’s (subjective) prior probability is an element \(p_i\in\Delta(W)\). Then, an epistemic-probability model is defined by specifying for each \(i\in N \), (1) a prior probability \(p_i\in\Delta(W)\) and (2) a partition \(\Pi_i\) of \(W\) such that for each \(w\in W\), \(p_i(\Pi_i(w)) > 0\). The probability measures for each \(i\in N \) associated with each possible world are then defined as follows:

\[ P_i(w)(\cdot)= p_i(\cdot \mid \Pi_i(w)) = \frac{p_i(\cdot\cap \Pi_i(w))}{p_i(\Pi_i(w))} \]Of course, the side condition that \(p_i(\Pi_i(w)) > 0\) for each \(w\in W\) is important since we cannot divide by zero; this will be discussed in more detail in later sections.
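The conditioning step can be sketched in a few lines. The Python below is a minimal illustration, assuming a hypothetical prior for Ann on the four states of Figure 5 (the numbers are not from the text) together with Ann's partition \(\{\{w_1,w_3\},\{w_2,w_4\}\}\). It derives \(P_a(w)\) by conditioning and refuses to condition on a cell of prior probability 0; exact fractions avoid rounding.

```python
from fractions import Fraction

W = ["w1", "w2", "w3", "w4"]
# Hypothetical prior for Ann (chosen for illustration only).
prior_a = {"w1": Fraction(1, 2), "w2": Fraction(1, 4),
           "w3": Fraction(1, 8), "w4": Fraction(1, 8)}
Pi_a = [{"w1", "w3"}, {"w2", "w4"}]


def cell(partition, w):
    """Return the information cell containing w."""
    return next(c for c in partition if w in c)


def posterior(prior, partition, w):
    """P_i(w) = p_i( . | Pi_i(w)); requires p_i(Pi_i(w)) > 0."""
    c = cell(partition, w)
    mass = sum(prior[v] for v in c)
    if mass == 0:
        raise ValueError("cannot condition on a cell with prior probability 0")
    return {v: (prior[v] / mass if v in c else Fraction(0)) for v in W}


P_a = {w: posterior(prior_a, Pi_a, w) for w in W}
print(P_a["w1"]["w1"], P_a["w1"]["w3"])  # 4/5 1/5
```

Both defining properties of an epistemic-probability model hold by construction: states in the same cell receive the same measure, and states outside the cell receive probability 0.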

A key observation (assuming that \(W\) is finite) is that for any epistemic-probability model, for each player, there is a prior probability (possibly different ones for different players) that generates the model as described above. This means that an epistemic-probability model assumes that the players’ beliefs about the possible outcome of the game are fixed ex ante with the ex interim beliefs derived through conditionalization on the player’s hard information. See Morris 1995 for an extensive discussion of the situation when there is a common prior (i.e., all players have the same prior).

As above we can define belief operators, this time specifying the precise degree to which an agent believes an event:

-

Probabilistic belief: For each \(r\in [0,1]\), let

\[B_i^r(E)=\{w \mid p_i^w(E)\ge r\}\]

-

Full belief: \(B_i(E)=B_i^1(E)=\{w \mid p_i^w(E)=1\}\)

So, full belief is defined as belief with probability 1. This is a standard assumption in this literature despite a number of well-known conceptual difficulties (see Genin & Huber 2020 [2022] for an extensive discussion of this and related issues). It is sometimes useful to work with the following alternative characterization of full belief (giving it a more “modal” flavor): Player \(i\) believes \(E\) at state \(w\) provided that the support of \(i\)’s probability at \(w\) is a subset of \(E\). That is,

\[ B_i(E)=\{w \mid \text{for all \(v\), if \(p_i^w(v)>0\) then \(v\in E\)}\} \]

See Fagin, Halpern, & Megiddo (1990); Heifetz & Mongin (2001); and Zhou (2010) for logical analyses of these belief operators.
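As a sanity check on these definitions, the following minimal Python sketch uses a hypothetical single-cell model in which \(w_3\) is possible but has probability 0 (the numbers are assumptions, not from the text). It computes \(B_i^r\), full belief, and the support characterization, confirms that the last two agree on every event, and shows that full belief need not imply knowledge.

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical single-cell model in which w3 is possible but has probability 0.
W = ["w1", "w2", "w3"]
partition = [{"w1", "w2", "w3"}]
prior = {"w1": Fraction(1, 2), "w2": Fraction(1, 2), "w3": Fraction(0)}


def cell(w):
    return next(c for c in partition if w in c)


def p(w, E):
    """p_i^w(E): the prior conditioned on the information cell of w."""
    c = cell(w)
    return sum((prior[v] for v in c & set(E)), Fraction(0)) / sum(prior[v] for v in c)


def B_r(r, E):
    """Probabilistic belief B_i^r(E) = {w | p_i^w(E) >= r}."""
    return {w for w in W if p(w, E) >= r}


def B_full(E):
    """Full belief: belief with probability 1."""
    return B_r(1, E)


def B_support(E):
    """'Modal' version: the support of p_i^w is a subset of E."""
    return {w for w in W if {v for v in cell(w) if prior[v] > 0} <= set(E)}


def K(E):
    """Knowledge: the whole information cell lies inside E."""
    return {w for w in W if cell(w) <= set(E)}


# Full belief and the support characterization agree on every event.
events = [set(c) for k in range(len(W) + 1) for c in combinations(W, k)]
assert all(B_full(X) == B_support(X) for X in events)

E = {"w1", "w2"}
print(sorted(B_full(E)), sorted(K(E)))  # ['w1', 'w2', 'w3'] []
```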

We conclude this section with an example of an epistemic-probability model. Recall the coordination game depicted in Figure 4: there are two actions for player Ann (a), \(u\) and \(d\), and two actions for Bob (b), \(l\) and \(r\). The set of strategy profiles is \(\{(u,l), (u,r), (d,l), (d,r)\}\). The preferences (or utilities) of the players are not important at this stage since we are only interested in describing the players’ knowledge and beliefs.

Figure 7 [An extended description of figure 7 is in the supplement.]

The solid lines represent Ann’s partition and the dashed lines represent Bob’s partition. We further assume there is a common prior \(p: W\rightarrow [0, 1]\) with the probabilities assigned to each state written to the right of the state (e.g., \(p(w_2)=\frac{1}{8}\)). Let \(E=\{w_2,w_5,w_6\}\) be an event. Then, we have

-

\(B_a^{\frac{1}{2}}(E)=\{w \mid p(E \mid \Pi_a(w))=\frac{p(E\cap\Pi_a(w))}{p(\Pi_a(w))}\geq\frac{1}{2}\}\,=\) \(\{w_1,w_2,w_3, w_4, w_5, w_6\}\): “Ann assigns probability at least 1/2 to the event \(E\) given her information at all states”.

-

\(B_b(E)=B_b^1(E)=\{w_2,w_3,w_5,w_6\}\). In particular, note that at \(w_6\), Bob believes (with probability 1) that \(E\) is true but does not know that \(E\) is true, since \(\Pi_b(w_6)\not\subseteq E\). So, there is a distinction between the states a player considers possible (given their “hard information”) and the states to which they assign non-zero probability.

-

Let \(U=\{w_1,w_2,w_3\}\) be the event that Ann plays \(u\) and \(L=\{w_1,w_4\}\) the event that Bob plays \(l\). Then, we have

-

\(K_a(U)=U\) and \(K_b(L)=L\): Ann and Bob each know which strategy they have chosen;

-

\(B_a^{\frac{1}{2}}(L)=U\): at all states where Ann plays \(u\), Ann assigns probability 1/2 to Bob playing \(l\); and

-

\(B_a(B_b^{\frac{1}{2}}(U))=\{w_1,w_2,w_3\}=U\): at all states where Ann plays \(u\), she believes that Bob assigns probability at least 1/2 to her playing \(u\).


2.1.3 Rational Choice in Epistemic-Probability Models

Each state in an epistemic-probability model of a game describes the players’ choices and each player’s belief about the other players’ choices. Suppose that \(G=\langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\) is a game in strategic form and \(\langle W, (\Pi_i)_{i\in N}, (P_i)_{i\in N}, \sigma\rangle\) is an epistemic-probability model for \(G\). For each strategy profile \(s\in S\), let \(s_i\) be the action chosen by \(i\) in \(s\) (i.e., \(s_i\) is the ith component of \(s\)), and let \(s_{-i}\) be the tuple of the choices in \(s\) of all players except \(i\). Each strategy profile \(s\) is associated with the following events:

- \([s_i]=\{w\mid \sigma(w)_i = s_i\}\) is the event that player \(i\) chooses \(s_i\).

- \([s_{-i}]=\{w\mid \sigma(w)_{-i} = s_{-i}\}=\bigcap_{j\ne i} [s_j]\) is the event that all the players except \(i\) choose their action in \(s_{-i}\).

- \([s] = \{w\mid \sigma(w)=s\} = \bigcap_i [s_i]\) is the event that the outcome of the game is \(s\).

For each player \(i\), let \(S_{-i} = \times_{j\ne i} S_j\) be the set of all possible combinations of choices of all players except \(i\). For each \(i\in N\) and each \(s_{-i}\in S_{-i}\), \(p_i^w([s_{-i}])\) is the probability that player \(i\) assigns at state \(w\) to the other players choosing their strategies in \(s_{-i}\). Then, the expected utility of player \(i\)’s choice in state \(w\), denoted \(\EU(\sigma(w)_i,w)\), is:

\[\sum_{s_{-i} \in S_{-i}} p_i^w([s_{-i}])u_i(\sigma(w)_i, s_{-i})\]where \(u_i(\sigma(w)_i, s_{-i})\) is the utility player \(i\) assigns to the strategy profile \(s^\prime\in S\) where \(s^\prime_i=\sigma(w)_i\) and \(s^\prime_{-i}=s_{-i}\). More generally, for any \(x\in S_i\), \(\EU(x,w)\) denotes the same sum with \(\sigma(w)_i\) replaced by \(x\). Player \(i\) is rational in state \(w\) when the expected utility of \(i\)’s choice in state \(w\) is maximal among \(i\)’s available choices (cf. Briggs 2014 [2019]). That is, \(i\) is rational in \(w\) when for all \(s'\in S_i\),

\[\sum_{s_{-i} \in S_{-i}} p_i^w([s_{-i}])u_i(\sigma(w)_i, s_{-i})\ge\sum_{s_{-i} \in S_{-i}} p_i^w([s_{-i}])u_i(s', s_{-i}) \]Then, for each \(i\in N\), the event \(\Rat_i = \{w \mid \mbox{ \(i\) is rational in state } w\}\) is the event that player \(i\) is rational, and \(\Rat=\bigcap_{i\in N } \Rat_i\) is the event that all players are rational.
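The rationality test amounts to comparing finitely many expected utilities. Below is a minimal Python sketch; the function names are hypothetical, and the usage plugs in Ann's payoffs and her probabilities for \(l\) and \(r\) exactly as they appear in the Figure 7 calculation in this section (1/2 and 1/2 in her cell containing \(w_1,w_2,w_3\); 1/6 and 5/6 in her other cell).

```python
from fractions import Fraction


def expected_utility(x, belief, u):
    """Sum over opponent choices s of belief[s] * u[(x, s)]."""
    return sum((prob * u[(x, s)] for s, prob in belief.items()), Fraction(0))


def is_rational(chosen, options, belief, u):
    """True if no available option has strictly higher expected utility."""
    best = max(expected_utility(x, belief, u) for x in options)
    return expected_utility(chosen, belief, u) == best


# Ann's payoffs as used in the Figure 7 calculation.
u_a = {("u", "l"): 3, ("u", "r"): 0, ("d", "l"): 0, ("d", "r"): 1}
belief_u_cell = {"l": Fraction(1, 2), "r": Fraction(1, 2)}  # Ann at w1, w2, w3
belief_d_cell = {"l": Fraction(1, 6), "r": Fraction(5, 6)}  # Ann at w4, w5, w6

print(expected_utility("u", belief_u_cell, u_a), expected_utility("d", belief_u_cell, u_a))  # 3/2 1/2
print(expected_utility("d", belief_d_cell, u_a), expected_utility("u", belief_d_cell, u_a))  # 5/6 1/2
```

Since Ann plays \(u\) in the first cell and \(d\) in the second, `is_rational` returns `True` at every state, matching \(\Rat_a=W\).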

To illustrate the above definitions, consider the epistemic-probability model depicted in Figure 7 for the game depicted in Figure 4. The profile \((u, r)\) corresponds to the following events:

- \([(u,r)] = \{w_2, w_3\}\)

- \([(u,r)_a] = [u] = \{w_1, w_2, w_3\}\)

- \([(u,r)_{-a}] = [(u,r)_b]= [r] = \{w_2, w_3, w_5, w_6\}\)

For \(w\in \{w_1, w_2, w_3\}\), we have the following:

\[ \begin{align*} \EU(\sigma_a(w),w) &= \EU(u, w)\\ & = p_a^{w}([l])u_a(u,l) + p_a^{w}([r])u_a(u,r) \\ &= \frac{1}{2} \cdot 3 + \frac{1}{2} \cdot 0 \\ &= \frac{3}{2} \end{align*} \] \[ \begin{align*} \EU(d,w) &= p_a^{w}([l])u_a(d,l) + p_a^{w}([r])u_a(d,r) \\ &= \frac{1}{2}\cdot 0 + \frac{1}{2} \cdot 1 \\ &= \frac{1}{2} \end{align*} \]For \(w\in \{w_4, w_5, w_6\}\), we have the following:

\[ \begin{align*} \EU(\sigma_a(w),w) &= \EU(d, w)\\ & = p_a^{w}([l])u_a(d,l) + p_a^{w}([r])u_a(d,r) \\ &= \frac{1}{6} \cdot 0 +\frac{5}{6} \cdot 1 \\ &= \frac{5}{6} \end{align*} \] \[ \begin{align*} \EU(u,w) &= p_a^{w}([l])u_a(u,l) + p_a^{w}([r])u_a(u,r) \\ &= \frac{1}{6} \cdot 3 +\frac{5}{6} \cdot 0 \\ &= \frac{1}{2} \end{align*} \]Thus, we have that

\[\Rat_a = \{w_1, w_2, w_3, w_4, w_5, w_6\}.\]A similar calculation shows that

\[\Rat_b = \{w_1, w_4, w_3, w_6\}.\]Thus,

\[\Rat = \{w_1, w_4, w_3, w_6\}.\]

2.2 Type Spaces

Type Spaces were initially introduced in Harsanyi’s seminal three-part paper, “Games with Incomplete Information Played by ‘Bayesian’ Players” (1967–68). Harsanyi aimed to develop a model for games in which players

may lack full information about other players’ or even their own payoff functions, about the physical facilities and strategies available to other players or even to themselves, about the amount of information the other players have about various aspects of the game situation, etc. (1967: 163)

The primary issue Harsanyi sought to address was the seemingly “infinite regress of reciprocal expectations on the part of the players” (1967: 163). Harsanyi’s proposed solution involved assigning each player a “type”, which represents their private information concerning any factors that could impact their beliefs about the game’s payoffs and the types of other players. The main idea is that each player’s type generates a hierarchy of beliefs describing what that player believes, what she believes the other players believe, and so on. Thus, a Type Space is a compact representation of the players’ belief hierarchies in a game.

Consult Siniscalchi (2008) for an overview of Type Spaces and Myerson (2004) for some historical remarks about Harsanyi’s (1967–68) groundbreaking contribution.

2.2.1 Belief Hierarchies

A key component of an epistemic analysis of a game is the players’ hierarchies of beliefs. For a game with a set \(S\) of strategy profiles, a hierarchy of beliefs for player \(i\) is an infinite sequence of probability measures \((p_i^1, p_i^2, p_i^3, \ldots)\) where, for each \(k\ge 1\), \(p_i^k\) represents player i’s kth-order belief. The formal definition of a hierarchy of belief is easiest to explain for two players. Consider a game for two players a and b with \(S_a\) the strategy set for a and \(S_b\) the strategy set for b. Recall that \(S_{-b}=S_a\) and \(S_{-a}=S_b\). Then, for \(i\in\{a,b\}\), player \(i\)’s first- and second-order beliefs are defined as follows:

- \(i\)’s first-order beliefs are about what the other player is going to do in the game. Thus, \(p_i^1\) is a probability measure over the strategies of the other player: \(p_i^1\in \Delta(S_{-i})\).

- \(i\)’s second-order beliefs are about what the other player is going to do in the game and what the other player believes that \(i\) is going to do in the game. Since the set \(S_{-i}\times \Delta(S_{i})\) contains all pairs consisting of a choice for the other player and a first-order belief for the other player, we have that \(p_i^2\in \Delta(S_{-i}\times \Delta(S_i))\).

Continuing in this manner, player \(i\)’s kth-order belief \(p_i^k\) is defined as follows. For each \(i\in\{a,b\}\), recursively define sets \(X_{-i}^{k}\) for \(k\ge 0\) as follows: let \(X_{-a}^0 = S_{b}\) and \(X_{-b}^0 = S_{a}\), and for \(k\ge 1\), set

\[X_{-i}^k = X_{-i}^{k-1} \times \Delta(X_i^{k-1}),\]where \(X_{i}^{k-1}\) (that is, \(X_{-j}^{k-1}\) for the other player \(j\)) is the domain of the other player’s kth-order beliefs (e.g., \(X_a^0=S_a\) is the domain of b’s first-order beliefs). Then, for each \(k\ge 1\), we have that \(p_i^k\in \Delta(X_{-i}^{k-1})\); for instance, \(p_i^1\in\Delta(X_{-i}^0)=\Delta(S_{-i})\) and \(p_i^2\in\Delta(X_{-i}^1)=\Delta(S_{-i}\times\Delta(S_i))\). Thus, the set of all hierarchies of beliefs for player \(i\) is the set \(\times_{k\ge 0} \Delta(X_{-i}^k)\).

It is important to keep in mind the following points regarding the players’ hierarchies of beliefs:

- In the previous section when defining an epistemic-probability model, we did not define a \(\sigma\)-algebra or mention any other mathematical assumption needed to formally define probability measures. This is because we have restricted attention to finite games and epistemic-probability models with a finite set of states. However, even if \(X\) is finite, the set \(\Delta(X)\) of probability measures over \(X\) is infinite (indeed, it is uncountable). Thus, some care is needed to formally define kth-order beliefs for \(k\geq 2\). Consult Billingsley (1999) for the mathematical details.

- A hierarchy of beliefs does not represent uncertainty about the players’ own choices or beliefs. Note that \(p_i^2\in \Delta(S_{-i}\times \Delta(S_i))\) and so \(p_i^2\) does assign probability to elements of \(\Delta(S_i)\). However, the elements of \(\Delta(S_i)\) are interpreted as beliefs of player \(-i\) rather than a mixed strategy for player \(i\) or beliefs of player \(i\) about her own strategy (cf. Section 3.3.2). The standard assumption in epistemic game theory is that the players are certain of their own strategy and are fully introspective in the sense that they are certain of their own beliefs.

- There are two ways to define a player’s kth-order belief given a hierarchy of beliefs. For example, consider \(p_i^2\in \Delta(S_{-i}\times \Delta(S_i))\). This probability measure generates a probability over \(S_{-i}\) by taking the marginal with respect to \(S_{-i}\) (i.e., for each element of \(S_{-i}\), summing, or integrating, \(p_i^2\) over the \(\Delta(S_i)\) component), denoted \(\textrm{marg}_{S_{-i}} p_i^2\) (so \(\textrm{marg}_{S_{-i}} p_i^2\in \Delta(S_{-i})\)). A natural assumption is to require that \(\textrm{marg}_{S_{-i}} p_i^2\) and \(p_i^1\) are the same probability measure. More generally, say that a hierarchy of beliefs \((p_i^1, p_i^2, \ldots)\) is coherent when for all \(k\geq 2\), \(\textrm{marg}_{X^{k-2}_{-i}}p_i^k = p_i^{k-1}\).

- Given the previous comment, one may wonder why \(p_i^2\) is defined to be a probability over \(S_{-i}\times \Delta(S_i)\) rather than using the simpler definition \(p_i^2\in \Delta(\Delta(S_i))\). The main observation is that in order to assess the probability that \(i\) assigns to player \(-i\) being rational, we need \(i\)’s probability that \(-i\) will choose a strategy when \(-i\) has such-and-such belief. It is not enough to represent \(i\)’s belief about what \(-i\) is going to do (an element of \(\Delta(S_{-i})\)) and separately what \(i\) believes that \(-i\) believes that \(i\) is going to do (an element of \(\Delta(\Delta(S_i))\)). A belief about rationality involves beliefs about the correct matching of choices with beliefs.

Rather than using a set of hierarchies of beliefs as a model of a game, much of the epistemic game theory literature uses a model, called a type space, introduced by Harsanyi in his seminal paper (1967–68) to represent the players’ hierarchies of beliefs (consult Brandenburger & Dekel 1993; Mertens & Zamir 1985; and Perea & Kets 2016, for an extended discussion about representing hierarchies of beliefs in Harsanyi’s model).

2.2.2 Qualitative Type Spaces

We start by defining a non-probabilistic version of a Type Space.

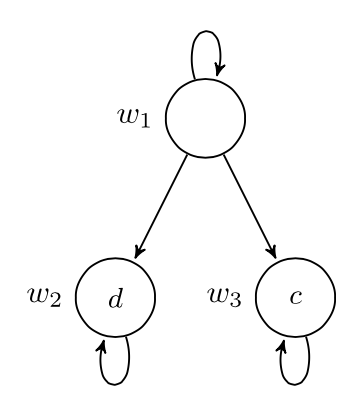

Definition 2.6 (Qualitative Type Space) Suppose that

\[G=\langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\]is a game in strategic form. A qualitative type space for \(G\) is a tuple \(\langle S, (T_i)_{i\in N}, (\lambda_i)_{i\in N}\rangle\) where for each \(i\in N \), \(T_i\) is a nonempty set (elements of which are called types), \(S\) is the set of strategy profiles in \(G\), and

\[ \lambda_i:T_i\rightarrow \wp(T_{-i} \times S_{-i}). \]where \(T=\times_i T_i\), \(T_{-i}=\times_{j\ne i} T_j\), \(S=\times_i S_i\), and \(S_{-i}=\times_{j\ne i} S_j\).

So, for each player \(i\in N\), the \(\lambda_i\) function assigns each type \(t\in T_i\) a set of tuples describing both the types and the choices of the other players.

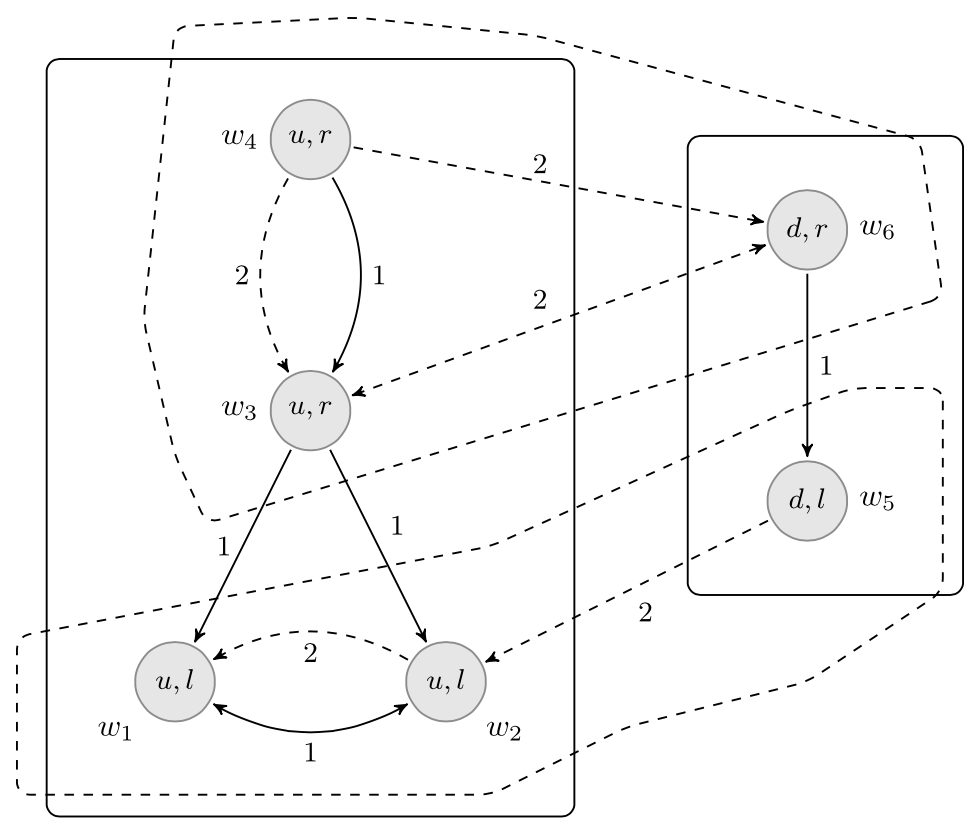

Consider the initial example of the coordination game between Ann (a) and Bob (b) pictured in Figure 1. In this case, the set of strategy profiles is \(S=\{(u,l),(d,l),(u,r),(d,r)\}\). Then, \(S_{-b} = S_a=\{u,d\}\) and \(S_{-a}=S_b = \{l,r\}\). Suppose that there are two types for each player: \(T_a=\{t_1,t_2\}\) and \(T_b=\{t'_1,t'_2\}\). Suppose that the functions \(\lambda_a\) and \(\lambda_b\) are defined as follows:

- \(\lambda_a:T_a\rightarrow \wp(T_b\times S_b)\) is the function where \(\lambda_a(t_1) = \{(t'_1, l), (t'_2, l)\}\) and \(\lambda_a(t_2) = \{(t'_2, l)\}\)

- \(\lambda_b:T_b\rightarrow \wp(T_a\times S_a)\) is the function where \(\lambda_b(t'_1) = \{(t_1, u)\}\) and \(\lambda_b(t'_2) = \{(t_2, d)\}\)

A convenient way to represent these functions is as follows:

| \(\lambda_a(t_{1})\) | l | r |
|---|---|---|
| \(t'_1\) | 1 | 0 |
| \(t'_2\) | 1 | 0 |

| \(\lambda_a(t_{2})\) | l | r |
|---|---|---|
| \(t'_1\) | 0 | 0 |
| \(t'_2\) | 1 | 0 |

| \(\lambda_b(t'_{1})\) | u | d |
|---|---|---|
| \(t_1\) | 1 | 0 |
| \(t_2\) | 0 | 0 |

| \(\lambda_b(t'_{2})\) | u | d |
|---|---|---|
| \(t_1\) | 0 | 0 |
| \(t_2\) | 0 | 1 |

Figure 8

where for each \(i\in \{a, b\}\) and for each \(t\in T_i\), a 1 in the \((t',s)\) entry of the above matrices means that \((t',s)\in\lambda_i(t)\). Before giving the formal definition of beliefs in a qualitative type space, we make the following observations about the above type structure:

-

Both of Ann’s types believe that Bob will choose \(l\): in both matrices, \(\lambda_a(t_1)\) and \(\lambda_a(t_2)\), every 1 appears in the \(l\) column.

-

The type \(t_2\) believes that Bob believes that she will choose \(d\): the only row assigned a 1 by the type \(t_2\) is \(t'_2\), and the only column assigned a 1 by \(t'_2\) is \(d\).

-

Both of Bob’s types believe that Ann believes that he will choose \(l\). The only row assigned a 1 by \(t'_1\) is \(t_1\) and the only row assigned a 1 by \(t'_2\) is \(t_2\), and, as noted in item 1, both of Ann’s types believe that Bob will choose \(l\).

These informal observations can be made more precise using the following notions. Fix a qualitative type space \(\langle S, (T_i)_{i\in N}, (\lambda_i)_{i\in N}\rangle\) for a game \(G=\langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\).

-

A (global) state, or possible world, is a tuple \((t_1,t_2,\ldots,t_n,s)\) where \(t_i\in T_i\) for each \(i\in N\) and \(s\in S\). It is convenient to write a possible world as: \((t_1,s_1,t_2,s_2,\ldots,t_n,s_n)\) where \(s_i\in S_i\) for each \(i\in N\).

-

Type spaces describe the players’ beliefs about the other players’ choices (and beliefs), so an event needs to be relativized to a player. An event for player \(i\) is a subset of \(\times_{j\ne i}T_j\times S_{-i}\).

-

Suppose that \(E\) is an event for player \(i\). Then we say that \(i\) believes \(E\) at \((t_1,t_2,\ldots,t_n,s)\) provided that \(\lambda_i(t_i)\subseteq E\).

In the example above, an event for Ann is a subset of \(T_b\times S_b\) and an event for Bob is a subset of \(T_a\times S_a\). Then, we have the following formal versions of the above informal observations about the qualitative type space in Figure 8.

-

Let \(L=\{(t'_1, l), (t'_2, l)\}\) be the event that Bob chooses strategy \(l\). Then, since

\[\lambda_a(t_1) = \{(t'_1, l), (t'_2, l)\} \subseteq L\]and

\[\lambda_a(t_2) = \{(t'_2, l)\}\subseteq L,\]we have that

\[B_a(L)=\{(t_1, u), (t_1, d), (t_2, u), (t_2,d)\}.\]

-

Let \(D=\{(t_1, d), (t_2, d)\}\) be the event that Ann chooses strategy \(d\). Then

\[B_b(D)=\{(t'_2, l), (t'_2, r)\}.\]Since

\[\lambda_a(t_1)=\{(t'_1, l), (t'_2, l)\}\not\subseteq B_b(D)\]and

\[\lambda_a(t_2)=\{(t'_2, l)\}\subseteq B_b(D),\]we have that

\[B_a(B_b(D)) = \{(t_2,u), (t_2, d)\}.\]

-

Recall that \(L=\{(t'_1, l), (t'_2, l)\}\) and

\[B_a(L) = \{(t_1, u), (t_1, d), (t_2, u), (t_2,d)\}.\]Then, it is straightforward to check that

\[B_b(B_a(L)) = \{(t'_1, l), (t'_1, r), (t'_2, l), (t'_2, r)\}.\]That is, both of Bob’s types believe that Ann believes that he will choose \(l\).

Note that the event \(B_a(L)\) is an event for Bob and the event \(B_b(D)\) is an event for Ann.
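The belief operator of a qualitative type space is easy to compute directly from the table in Figure 8. The following is a minimal Python sketch; the dictionary layout and the function name `believes` are assumptions made for illustration. Each type's \(\lambda\)-set is a set of (opponent type, opponent strategy) pairs, and \(B_i(E)\) collects the (type, strategy) pairs of player \(i\) whose \(\lambda\)-set lies inside \(E\).

```python
# Figure 8: lam[i][t] is the set of (opponent type, opponent strategy) pairs
# that type t of player i considers possible.
T = {"a": ["t1", "t2"], "b": ["t1'", "t2'"]}
S = {"a": ["u", "d"], "b": ["l", "r"]}
lam = {
    "a": {"t1": {("t1'", "l"), ("t2'", "l")}, "t2": {("t2'", "l")}},
    "b": {"t1'": {("t1", "u")}, "t2'": {("t2", "d")}},
}


def believes(i, event):
    """B_i(E): pairs (t, s) of player i such that lam_i(t) is a subset of E.

    `event` is an event for player i (a set of the other player's
    (type, strategy) pairs); the result is an event for the other player."""
    return {(t, s) for t in T[i] for s in S[i] if lam[i][t] <= event}


L = {("t1'", "l"), ("t2'", "l")}   # Bob chooses l
D = {("t1", "d"), ("t2", "d")}     # Ann chooses d
print(sorted(believes("a", believes("b", D))))  # [('t2', 'd'), ('t2', 'u')]
```

Because the output of `believes` for one player is an event for the other, operators can be nested as in \(B_a(B_b(D))\).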

2.2.3 Probabilistic Type Spaces

A small change to the definition of a qualitative type space (Definition 2.6) allows us to represent probabilistic beliefs:

Definition 2.7 (Type Space) Suppose that \(G=\langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\) is a game in strategic form. A type space for \(G\) is a tuple \(\langle S, (T_i)_{i\in N}, (\lambda_i)_{i\in N}\rangle\) where for each \(i\in N \), \(T_i\) is a nonempty set (elements of which are called types), \(S\) is the set of strategy profiles in \(G\), and

\[ \lambda_i:T_i\rightarrow \Delta(\times_{j\ne i} T_j\times S_{-i}). \]Types and their associated image under \(\lambda_i\) encode the players’ hierarchies of beliefs. For instance, if \(t\in T_i\), then \(\lambda_i(t)\) is a probability on \(T_{-i}\times S_{-i}\), and so \(\textrm{marg}_{S_{-i}}\lambda_i(t)\in \Delta(S_{-i})\) is \(i\)’s first-order belief. For a type \(t\in T_i\), let \(p^1_t\) denote the first-order belief for player \(i\) associated with \(t\) (i.e., \(p^1_t = \textrm{marg}_{S_{-i}} \lambda_i(t)\)). We illustrate how to define higher-order beliefs with an example.

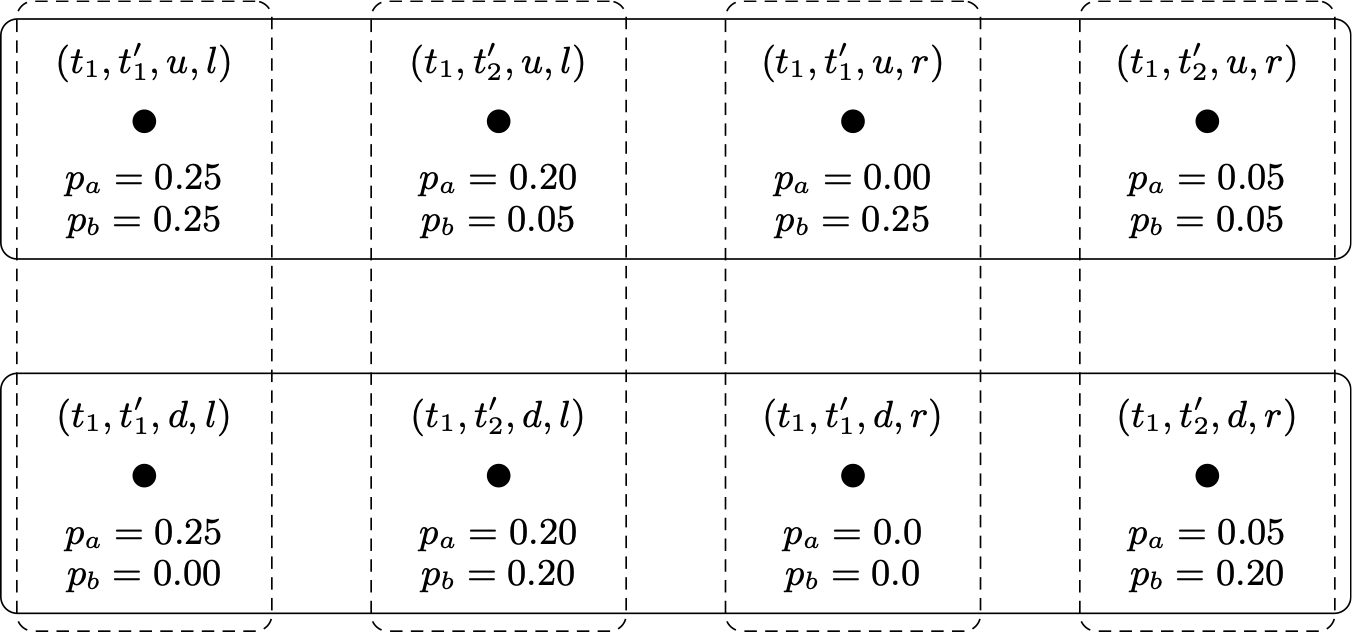

Example 2.8

Return again to our running example, in which Ann (a) has two available actions \(\{u,d\}\) and Bob (b) has two available actions \(\{l,r\}\). Suppose that there is one type for Ann, \(T_a=\{t_1\}\), and two types for Bob, \(T_b=\{t_1', t'_2\}\), with \(\lambda_a\) and \(\lambda_b\) given in the following matrices:

| \(\lambda_a(t_1)\) | l | r |
|---|---|---|
| \(t^\prime_1\) | 0.5 | 0 |
| \(t^\prime_2\) | 0.4 | 0.1 |

Figure 9: Ann’s beliefs about Bob

| \(\lambda_b(t^\prime_1)\) | u | d |
|---|---|---|
| \(t_1\) | 1 | 0 |

| \(\lambda_b(t^\prime_2)\) | u | d |
|---|---|---|
| \(t_1\) | 0.2 | 0.8 |

Figure 10: Bob’s belief about Ann

The first- and second-order beliefs for the players encoded in the above type space are:

-

For Ann, we have that \(p^1_{t_1}\) is the probability with

\[p^1_{t_1}(l) = \lambda_a(t_1)(l,t^\prime_1) + \lambda_a(t_1)(l,t^\prime_2) = 0.5 + 0.4 = 0.9\]and

\[p^1_{t_1}(r) = \lambda_a(t_1)(r,t^\prime_1) + \lambda_a(t_1)(r,t^\prime_2) = 0 + 0.1 = 0.1.\]For Bob, we have that \(p^1_{t^\prime_1}\) is the probability with \(p^1_{t^\prime_1}(u) = 1.0\) and \(p^1_{t^\prime_1}(d) = 0.0\), and \(p^1_{t^\prime_2}\) is the probability with \(p^1_{t^\prime_2}(u) = 0.2\) and \(p^1_{t^\prime_2}(d) = 0.8\).

-

Ann considers both of Bob’s types equally probable (0.5): Ann’s probability that Bob is of type \(t^\prime_1\) is

\[\lambda_a(t_1)(l, t^\prime_1) + \lambda_a(t_1)(r, t^\prime_1) = 0.5 + 0 = 0.5\]and Ann’s probability that Bob is of type \(t^\prime_2\) is

\[\lambda_a(t_1)(l, t^\prime_2) + \lambda_a(t_1)(r, t^\prime_2) = 0.4 + 0.1 = 0.5.\]This means that she considers it equally likely that Bob is certain she plays \(u\) and that Bob assigns probability 0.2 to her playing \(u\). More precisely, the second-order probability for \(t_1\), denoted \(p^2_{t_1}\), is defined as follows:

- \(p^2_{t_1}(l, p^1_{t^\prime_1}) = 0.5\),

- \(p^2_{t_1}(r, p^1_{t^\prime_1}) = 0.0\),

- \(p^2_{t_1}(l, p^1_{t^\prime_2}) = 0.4\), and

- \(p^2_{t_1}(r, p^1_{t^\prime_2}) = 0.1\).

- Since there is a unique type for Ann, Bob is certain that Ann is of type \(t_1\), and so Bob’s second-order (and higher-order) probabilities are based only on his first-order beliefs.
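These first- and second-order beliefs can be read off mechanically from the matrices in Figures 9 and 10. The Python sketch below is one way to do it, assuming a dictionary encoding keyed by (opponent type, opponent strategy) and representing each of Bob's first-order beliefs as a sorted tuple so it can serve as part of a key; the helper names are hypothetical. It also checks that marginalizing \(p^2_{t_1}\) onto Bob's strategies recovers \(p^1_{t_1}\) (coherence).

```python
from collections import defaultdict
from fractions import Fraction as F

# Example 2.8. lambda_a(t1) is keyed by (Bob's type, Bob's strategy);
# lambda_b(t) is keyed by (Ann's type, Ann's strategy).
lam_a = {"t1": {("t1'", "l"): F("0.5"), ("t1'", "r"): F(0),
                ("t2'", "l"): F("0.4"), ("t2'", "r"): F("0.1")}}
lam_b = {"t1'": {("t1", "u"): F(1), ("t1", "d"): F(0)},
         "t2'": {("t1", "u"): F("0.2"), ("t1", "d"): F("0.8")}}


def first_order(lam_t):
    """Marginal on the opponent's strategies: sum lambda_i(t) over opponent types."""
    p1 = defaultdict(F)
    for (_, s), prob in lam_t.items():
        p1[s] += prob
    return dict(p1)


def type_marginal(lam_t):
    """Marginal on the opponent's types."""
    pt = defaultdict(F)
    for (t, _), prob in lam_t.items():
        pt[t] += prob
    return dict(pt)


def as_key(p):
    """Hashable representation of a finite probability measure."""
    return tuple(sorted(p.items()))


def second_order(lam_t, lam_other):
    """p^2: probabilities of (opponent strategy, opponent first-order belief) pairs."""
    p2 = defaultdict(F)
    for (t_other, s), prob in lam_t.items():
        p2[(s, as_key(first_order(lam_other[t_other])))] += prob
    return dict(p2)


p1 = first_order(lam_a["t1"])
p2 = second_order(lam_a["t1"], lam_b)

# Coherence check: marginalizing p^2 onto Bob's strategies recovers p^1.
marg = defaultdict(F)
for (s, _), prob in p2.items():
    marg[s] += prob
print(p1 == dict(marg))  # True
```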

The above type space is a very compact description of the players’ beliefs. It is not hard to see that every type space can be transformed into an epistemic-probability model. Suppose that \(\cT= \langle S, (T_i)_{i\in N}, (\lambda_i)_{i\in N}\rangle\) is a type space for a game \(G=\langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\). We can transform \(\cT\) into the epistemic-probability model \(\cM^\cT = \langle W^\cT, (\sim^\cT_i)_{i\in N}, (P^\cT_i)_{i\in N}\rangle\), where

- \(W^\cT = T\times S\), where \(T=\times_{i\in N}T_i\) and \(S=\times_{i\in N} S_i\)

- For \((t,s), (t', s')\in W^\cT\), we have \((t,s)\sim^\cT_i (t',s')\) if and only if \(t_i=t'_i\) and \(s_i = s'_i\)

-

\(P^\cT_i\) is the function where for \((t,s)\in W^\cT\), \(P^\cT_i(t,s)\) is the following probability:

\[P^\cT_i(t,s)(t',s') = \begin{cases} \lambda_i(t_i)(t'_{-i}, s'_{-i}) & \mbox{ if \((t',s')\in [(t,s)]_i\)}\\ 0 & \mbox{ otherwise} \end{cases}\]where \([(t,s)]_i\) is the equivalence class of \((t,s)\) under \(\sim^\cT_i\).

It is immediate that \(\cT\) and \(\cM^\cT\) are equivalent game models in the sense that they generate the same hierarchies of beliefs. To illustrate the above construction, the following epistemic-probability model is \(\cM^\cT\), where \(\cT\) is the type space from the above Example 2.8. (In the figure below, rather than representing the function \(P_i\) for each player \(i\in \{a,b\}\), prior probabilities \(p_a\) and \(p_b\) are given and the functions \(P_a\) and \(P_b\) are derived by conditioning as explained in Section 2.1.2.)

Figure 11 [An extended description of figure 11 is in the supplement.]

Some simple (but instructive!) calculations show that the above epistemic-probability model describes the same beliefs as the type space from the above Example 2.8. Constructing an equivalent type space from an epistemic-probability model is more complicated. See Galeazzi & Lorini 2016 and Bjorndahl & Halpern 2017 for a discussion (cf. also Fagin, Geanakoplos, et al. 1999; Brandenburger & Dekel 1993; Heifetz & Samet 1998; and Klein & Pacuit 2014 for further discussions about the relationship between type spaces and epistemic-probability models).
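Some of these calculations can be automated. The following minimal Python sketch builds \(W^\cT=T\times S\) for Example 2.8 and defines \(P^\cT_a\) and \(P^\cT_b\) directly from \(\lambda_a\) and \(\lambda_b\) as in the transformation described above (rather than through the priors shown in Figure 11). The state layout \((t_a, t_b, s_a, s_b)\) and the helper names are assumptions made for illustration.

```python
from fractions import Fraction as F
from itertools import product

# Example 2.8: lambda_a is keyed by (Bob's type, Bob's strategy),
# lambda_b by (Ann's type, Ann's strategy).
T_a, T_b = ["t1"], ["t1'", "t2'"]
S_a, S_b = ["u", "d"], ["l", "r"]
lam_a = {"t1": {("t1'", "l"): F("0.5"), ("t1'", "r"): F(0),
                ("t2'", "l"): F("0.4"), ("t2'", "r"): F("0.1")}}
lam_b = {"t1'": {("t1", "u"): F(1), ("t1", "d"): F(0)},
         "t2'": {("t1", "u"): F("0.2"), ("t1", "d"): F("0.8")}}

# W^T = T x S; a state is written (t_a, t_b, s_a, s_b).
W = list(product(T_a, T_b, S_a, S_b))


def P_a(w, v):
    """P_a(w)(v): lambda_a(t_a) on Bob's part of v if v is in Ann's cell at w, else 0."""
    if (w[0], w[2]) != (v[0], v[2]):   # Ann's cell: same own type and strategy
        return F(0)
    return lam_a[w[0]][(v[1], v[3])]


def P_b(w, v):
    """P_b(w)(v): lambda_b(t_b) on Ann's part of v if v is in Bob's cell at w, else 0."""
    if (w[1], w[3]) != (v[1], v[3]):   # Bob's cell: same own type and strategy
        return F(0)
    return lam_b[w[1]][(v[0], v[2])]


def prob(P, w, event):
    """Probability that the player assigns to an event (a set of states) at w."""
    return sum((P(w, v) for v in W if v in event), F(0))


bob_plays_l = {v for v in W if v[3] == "l"}
print(prob(P_a, W[0], bob_plays_l))  # 9/10
```

At every state Ann assigns probability 0.9 to Bob choosing \(l\), which matches her first-order belief \(p^1_{t_1}\) in the type space.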

2.2.4 Rational Choice in Type Spaces

A state in a type space is a pair \((t, s)\) where \(t\) lists the type for each player and \(s\) is a strategy profile in the underlying game. Suppose that

\[G=\langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\]is a game in strategic form and

\[\langle S, (T_i)_{i\in N}, (\lambda_i)_{i\in N}\rangle\]is a type space for \(G\).

For each player \(i\in N\) and each type \(t_i\in T_i\), player \(i\)’s first-order belief \(p^1_{t_i}\in \Delta(S_{-i})\) is defined as \(p^1_{t_i} = \textrm{marg}_{S_{-i}} \lambda_i(t_i)\). When the sets of types and strategies are finite, this means that \(p^1_{t_i}\) is defined as follows:

\[ p^1_{t_i}(s_{-i})= \sum_{t_{-i}\in T_{-i}}\lambda_i(t_i)(s_{-i},t_{-i}) \]Given a state \((t,s)\), we say that \(i\) is rational in \((t,s)\) when \(s_i\) maximizes player \(i\)’s expected utility with respect to \(i\)’s first-order beliefs \(p_{t_i}^1\) (for a strategy \(x\) and probability \(p\), we write \(\EU(x, p)\) for the expected utility of \(x\) with respect to \(p\)). That is, \(i\) is rational in \((t,s)\) when for all \(s'\in S_i\),

\[\begin{align*} \EU(s_i, p^1_{t_i}) & = \sum_{s'_{-i} \in S_{-i}} p^1_{t_i}(s'_{-i})u_i(s_i, s'_{-i})\\ & \ge\sum_{s'_{-i} \in S_{-i}} p^1_{t_i}(s'_{-i})u_i(s', s'_{-i}) \\ & = \EU(s', p^1_{t_i}) \\ \end{align*} \]Let \(\Rat\subseteq T\times S\) be the set of states in which all players are rational:

\[\Rat = \{(t,s) \mid (t,s)\in T\times S\mbox{ and \(i\) is rational in \((t,s)\) for all \(i\in N\) }\}\]Given the set \(\Rat\) of states in which all players are rational, we define the following sets:

- \(\Rat_i = \{(t_i, s_i)\mid (t,s)\in \Rat\}\)

- \(\Rat_{-i} = \{(t_{-i}, s_{-i})\mid (t,s)\in \Rat\}\)

To illustrate the above definitions, consider the game in Figure 4 and the type space from the above Example 2.8. The following calculations show that \(u\) maximizes expected utility for player \(a\) given the beliefs described by her type \(t_1\):

\[ \begin{align*} \EU(u,p^1_{t_1}) &= p^1_{t_1}(l)u_a(u,l) + p^1_{t_1}(r)u_a(u,r)\\ & = [\lambda_a(t_1)(l, t^\prime_1) + \lambda_a(t_1)(l ,t^\prime_2)]\cdot u_a(u,l) \\ &\qquad {} + [\lambda_a(t_1)(r, t^\prime_1) + \lambda_a(t_1)(r,t^\prime_2)] \cdot u_a(u,r) \\ &= (0.5 + 0.4) \cdot 3 + (0 + 0.1)\cdot 0 \\ &= 2.7 \end{align*} \] \[ \begin{align*} \EU(d,p^1_{t_1}) &= p^1_{t_1}(l)u_a(d,l) + p^1_{t_1}(r)u_a(d,r)\\ & = [\lambda_a(t_1)(l,t^\prime_1) + \lambda_a(t_1)(l,t^\prime_2)]\cdot u_a(d,l) \\ & \qquad {} + [\lambda_a(t_1)(r, t^\prime_1) + \lambda_a(t_1)(r, t^\prime_2)] \cdot u_a(d,r) \\ &= (0.5 + 0.4) \cdot 0 + (0 + 0.1)\cdot 1 \\ &= 0.1 \end{align*}\]Since \(\EU(u, p^1_{t_1}) > \EU(d, p^1_{t_1})\), \(u\) maximizes expected utility for the type \(t_1\). Similar calculations show that \(\Rat = \{(t_1, t^\prime_1, u, l), (t_1, t^\prime_2, u, r)\}.\)
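The calculation above is a marginalization followed by a weighted sum, so it is easy to reproduce. The Python sketch below uses the probabilities \(\lambda_a(t_1)\) and the payoffs \(u_a\) that appear in the calculation; the function names are our own, and the labels `t1'` and `t2'` stand for player \(b\)'s types \(t^\prime_1\) and \(t^\prime_2\). Exact fractions are used so that ties in expected utility are detected reliably.

```python
from fractions import Fraction

# lambda_a(t_1) from the calculation above, keyed by (s_b, t_b);
# "t1'" and "t2'" stand for player b's types t'_1 and t'_2.
lam_a_t1 = {
    ("l", "t1'"): Fraction(1, 2),
    ("l", "t2'"): Fraction(2, 5),
    ("r", "t1'"): Fraction(0),
    ("r", "t2'"): Fraction(1, 10),
}
# Player a's payoffs u_a(s_a, s_b) as used in the calculation above.
u_a = {("u", "l"): 3, ("u", "r"): 0, ("d", "l"): 0, ("d", "r"): 1}


def first_order_belief(lam_ti):
    """p^1_{t_i}: marginal of lambda_i(t_i) on S_{-i} (sum over opponent types)."""
    p = {}
    for (s_opp, _t_opp), prob in lam_ti.items():
        p[s_opp] = p.get(s_opp, Fraction(0)) + prob
    return p


def expected_utility(x, p, u):
    """EU(x, p) = sum over s_{-i} of p(s_{-i}) * u_i(x, s_{-i})."""
    return sum(prob * u[(x, s_opp)] for s_opp, prob in p.items())


def is_rational(s_i, strategies, p, u):
    """s_i is rational if no alternative strategy has higher expected utility."""
    return all(expected_utility(s_i, p, u) >= expected_utility(x, p, u)
               for x in strategies)


p1 = first_order_belief(lam_a_t1)
print(p1["l"], p1["r"])                       # 9/10 1/10
print(expected_utility("u", p1, u_a))         # 27/10
print(expected_utility("d", p1, u_a))         # 1/10
print(is_rational("u", ["u", "d"], p1, u_a))  # True
```

Computing \(\Rat\) in full would require the same check for player \(b\)'s types, which needs \(\lambda_b\) and \(u_b\) from Example 2.8.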

2.3 Common Knowledge and Belief

A standard assumption in game theory is that the players in a game are rational and that it is commonly known or commonly believed that the players are rational. Both game theorists and logicians have extensively discussed different notions of knowledge and belief for a group, such as common knowledge and belief. For more information and pointers to the relevant literature, see Vanderschraaf & Sillari (2005 [2022]); Fagin, Halpern, et al. (1995: ch. 6); and Lederman (2018a).

Suppose that \(G\) is a game with players \(N\) and that \((K_i)_{i\in N}\) are knowledge operators from some epistemic(-probability) model for \(G\). We define the following notions of group knowledge:

- An event \(E\) is mutual knowledge if all of the players know \(E\). For each event \(E\), let

\[ K(E)\ \ :=\ \ \bigcap_{i\in N}K_i(E). \]

- For \(k\ge 0\), the kth-level knowledge of an event \(E\) is defined recursively as follows:

\[ K^0(E)=E \qquad{\text{and for \(k\ge 1\),}}\quad K^k(E)=K(K^{k-1}(E)) \]

- If \(E\) is common knowledge for a group of players, then not only does every player know that \(E\) is true, but this fact is completely transparent to all the players. Following Aumann (1976), common knowledge of \(E\) is defined as the following infinite conjunction:

\[ \CK(E)=\bigcap_{k\ge 0}K^k(E) \]Unpacking the definitions, we have

\[ \CK(E)=E\cap K(E) \cap K(K(E)) \cap K(K(K(E)))\cap \cdots \]Consult Barwise 1988, Heifetz 1999a, Cubitt & Sugden 2014, and Lederman 2018b for a discussion of alternative definitions of common knowledge.
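In a finite epistemic model where each \(K_i\) comes from a partition (the equivalence classes of \(\sim_i\)), the infinite intersection does not have to be computed term by term: since \(K(F)\subseteq F\) for every event \(F\), the sequence \(K^k(E)\) is decreasing, so it stabilizes after finitely many steps, and its stable value is \(\CK(E)\). The Python sketch below assumes a hypothetical model with five states and two players; the states, partitions, and events are invented for illustration.

```python
def knows(partition, event):
    """K_i(E): the union of player i's cells that are contained in E."""
    return frozenset().union(*(cell for cell in partition if cell <= event))


def mutual_knowledge(partitions, event):
    """K(E): the intersection of K_i(E) over all players."""
    return frozenset.intersection(*(knows(p, event) for p in partitions.values()))


def common_knowledge(partitions, event):
    """CK(E), computed as the limit of E, K(E), K(K(E)), ...

    Because K(F) is a subset of F for partitional knowledge, the sequence
    is decreasing and stops changing after finitely many steps in a
    finite model; the stable value is the infinite intersection CK(E).
    """
    current = frozenset(event)
    while True:
        nxt = mutual_knowledge(partitions, current)
        if nxt == current:
            return current
        current = nxt


# Hypothetical models: five states, two players, each with a partition.
P1 = {"a": [frozenset({1, 2}), frozenset({3, 4}), frozenset({5})],
      "b": [frozenset({1}), frozenset({2, 3}), frozenset({4, 5})]}
P2 = {"a": [frozenset({1, 2}), frozenset({3}), frozenset({4, 5})],
      "b": [frozenset({1}), frozenset({2, 3}), frozenset({4}), frozenset({5})]}

print(sorted(mutual_knowledge(P1, frozenset({1, 2, 3, 4}))))  # [1, 2, 3]
print(sorted(common_knowledge(P1, frozenset({1, 2, 3, 4}))))  # []
print(sorted(common_knowledge(P2, frozenset({1, 2, 3}))))     # [1, 2, 3]
```

With the first pair of partitions, \(E=\{1,2,3,4\}\) is mutual knowledge at states 1, 2, and 3, but each further level of knowledge removes a state, and \(\CK(E)\) is empty. With the second pair, \(\{1,2,3\}\) is a union of cells of both partitions, and it is common knowledge at each of its states.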

The approach to defining common knowledge outlined above can be viewed as a recipe for defining common (robust/strong) belief (simply replace the knowledge operators \(K_i\) with the appropriate belief operator). For instance, the definition of common belief in a type space follows a similar pattern.

Suppose that \(G=\langle N, (S_i)_{i\in N}, (u_i)_{i\in N}\rangle\) is a game in strategic form and \(\langle S, (T_i)_{i\in N}, (\lambda_i)_{i\in N}\rangle\) is a type space for \(G\). Suppose that \(i\in N\) and that \(E\subseteq S_{-i}\times T_{-i}\) is an event for player \(i\). We say that a type for player \(i\) believes that \(E\) when the type assigns probability 1 to \(E\) (in the epistemic game theory literature, it is standard to use “belief” for probability 1 rather than “certainty”). Let \(B_i(E)\) be the set of strategy-type pairs for player \(i\) such that the type assigns probability 1 to \(E\):

\[B_i(E) = \{(s_i, t_i)\mid (s,t)\in S\times T\mbox{ and } \lambda_i(t_i)(E) = 1\}\]Suppose that \((E_i)_{i\in N}\) is a sequence of events where, for each \(i\in N\), \(E_i\subseteq S_i\times T_i\). Let \(E=\times_{i\in N} E_i\) and \(E_{-i} = \times_{j\ne i} E_j\) (so that, after rearranging coordinates, \(E_{-i}\) is an event for player \(i\)). Then, mutual belief of \(E\), denoted \(B(E)\), is defined as follows:

\[B(E) = \times_{i\in N}B_i(E_{-i})\]Note that \(B(E)_{-i} = \times_{j\ne i}B_j(E_{-j})\) and, after applying the obvious transformation, we can treat this set as a subset of \(S_{-i}\times T_{-i}\). Thus, we can abuse notation and write \(B_i(B(E)_{-i})\) for the set of strategy-type pairs such that the type assigns probability 1 to the mutual belief of \(E\). The kth-level belief of \(E\) is defined as above:

\[ B^1(E)=B(E) \qquad{\text{and for \(k\geq 2\),}}\quad B^k(E)=B(B^{k-1}(E)) \]Finally, common belief of \(E\) is also defined as above:

\[\CB(E)=\bigcap_{k\geq 1}B^k(E)\]See Bonanno (1996) and Lismont & Mongin (1994, 2003) for a discussion of the logic of common belief. Although we do not discuss it in this entry, a probabilistic variant of common belief was introduced by Monderer & Samet (1989).
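Common belief in a finite type space can be computed in a similar way to common knowledge, with one difference: belief is not factive, so the sequence \(B^1(E), B^2(E), \ldots\) need not be decreasing. Because the space is finite, however, the sequence is eventually periodic, and intersecting the terms up to the first repetition gives \(\CB(E)\). The Python sketch below assumes two players and a hypothetical type space (all strategies, types, and probabilities are invented). Since each \(B^k(E)\) is a product set, it stores one component per player and intersects componentwise.

```python
from fractions import Fraction

# Hypothetical two-player type space: strategies, types, and probabilities
# are invented for illustration.
PLAYERS = ("a", "b")
OTHER = {"a": "b", "b": "a"}
S = {"a": ["u", "d"], "b": ["l", "r"]}
T = {"a": ["ta1", "ta2"], "b": ["tb1", "tb2"]}
# lam[i][t_i] maps (s_{-i}, t_{-i}) to a probability; omitted pairs get 0.
lam = {
    "a": {"ta1": {("l", "tb1"): Fraction(1)},
          "ta2": {("l", "tb1"): Fraction(1, 2), ("r", "tb2"): Fraction(1, 2)}},
    "b": {"tb1": {("u", "ta1"): Fraction(1)},
          "tb2": {("d", "ta2"): Fraction(1)}},
}


def believes(i, event):
    """B_i(F): pairs (s_i, t_i) whose type assigns probability 1 to F."""
    return frozenset(
        (s_i, t_i)
        for s_i in S[i]
        for t_i in T[i]
        if sum(p for pair, p in lam[i][t_i].items() if pair in event) == 1
    )


def mutual_belief(E):
    """B(E): component i is B_i(E_{-i}); E maps each player to a subset of S_i x T_i."""
    return {i: believes(i, E[OTHER[i]]) for i in PLAYERS}


def common_belief(E):
    """CB(E) = intersection of B^k(E) for k >= 1, computed componentwise.

    The sequence B^1(E), B^2(E), ... is eventually periodic in a finite
    space, so once a term repeats, every later term has already been seen.
    """
    seen = []
    current = mutual_belief(E)
    while current not in seen:
        seen.append(current)
        current = mutual_belief(current)
    return {i: frozenset.intersection(*(B[i] for B in seen)) for i in PLAYERS}


E = {"a": frozenset({("u", "ta1")}),
     "b": frozenset({("l", "tb1"), ("l", "tb2")})}
CB = common_belief(E)
print(sorted(CB["a"]))  # [('d', 'ta1'), ('u', 'ta1')]
print(sorted(CB["b"]))  # [('l', 'tb1'), ('r', 'tb1')]
```

In this example the player-\(a\) component of \(\CB(E)\) contains the pair (d, ta1), which is not in \(E_a\): common belief in \(E\) does not by itself imply that \(E\) holds.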

2.4 A Paradox of Self-Reference in Game Models

The first step in any epistemic analysis of a game is to describe the players’ knowledge and beliefs using (a variant of) one of the models introduced in Section 2. As we noted in Section 2.1.1, there will be statements about what the players know and believe about the game situation and about each other that are commonly known in some models but not in others:

In any particular [type] structure, certain beliefs, beliefs about belief, …, will be present and others won’t be. So, there is an important implicit assumption behind the choice of a [type] structure. This is that it is “transparent” to the players that the beliefs in the type structure—and only those beliefs—are possible ….The idea is that there is a “context” to the strategic situation (e.g., history, conventions, etc.) and this “context” causes the players to rule out certain beliefs. (Brandenburger & Friedenberg 2010: 801)

Ruling out certain configurations of beliefs constitutes substantive assumptions about the players’ reasoning during the decision-making process. In other words, substantive assumptions are about what the players know and believe about the game and each other over and above what is intrinsic to the mathematical representation of the players’ knowledge and beliefs. It is not hard to see that one always finds substantive assumptions in finite game models: Given a countably infinite set of basic facts (e.g., atomic propositions in a propositional language), in any finite game model it will be common knowledge that some logically consistent combination of these basic facts is not realized (a finite model has only finitely many states, but there are infinitely many such combinations), and a fortiori for logically consistent configurations of (higher-order) beliefs/knowledge about these basic facts. On the other hand, monotonicity of the belief/knowledge operator is a typical example of an assumption that is not substantive. More generally, there are no models of games, as we defined in Section 2, where it is not common knowledge that the players believe all the logical consequences of their beliefs.[11]

Are there models that make no, or at least as few as possible, substantive assumptions? This question has been extensively discussed in epistemic game theory—see, for instance, Dekel & Gul (1997), Aumann (1999a, 1999b), and Samuelson (1992). Intuitively, a model without any substantive assumptions must represent all possible states of (higher-order) knowledge and beliefs of the players. Whether such a model exists will depend, in part, on how the players’ informational attitudes are represented—e.g., as probability measures or set-valued knowledge/belief functions.

There are different ways to understand what it means for a model to minimize the substantive assumptions about what the players know and believe about each other and the game. We do not attempt a complete overview of this interesting literature here (see Brandenburger & Keisler (2006: sec. 11) and Siniscalchi (2008: sec. 3) for discussion and pointers to the relevant results). One approach considers the space of all (Harsanyi type-/epistemic-/epistemic-probability-) models and tries to find a single model that, in some suitable sense, “contains” all other models. Such a model, often called a universal structure (or a terminal object in the language of category theory), if it exists, incorporates any substantive assumption that an analyst can imagine. A universal structure has been shown to exist for probabilistic type spaces (Mertens & Zamir 1985; Brandenburger & Dekel 1993). However, there is no similar universal structure for epistemic models (Heifetz & Samet 1998; Fagin, Geanakoplos, Halpern, & Vardi 1999; Meier 2005), with some qualifications regarding the language that is used to describe the players’ knowledge (Heifetz 1999b; Roy & Pacuit 2013).

A second approach takes an internal perspective by asking whether, for a fixed set of states or types, the players are making any substantive assumptions about what their opponents know or believe. The idea is to identify (in a given model) a set of possible conjectures about the players. For example, in an epistemic model based on a set of states \(W\), this might be the set of all subsets of \(W\) or the set of definable subsets of \(W\) in some suitable logical language. A space is said to be complete if each agent correctly takes into account each possible conjecture about her opponents. A simple counting argument shows that there cannot exist a complete structure when the set of conjectures is all subsets of the set of states (Brandenburger 2003). However, there is a deeper result, which we discuss below.

The Brandenburger-Keisler Paradox

Adam Brandenburger and H. Jerome Keisler (2006) introduce the following Russell-style paradox. The statement of the paradox involves two concepts: beliefs and assumptions. An assumption for a player is that player’s strongest belief: it is a set of states that implies all other beliefs at a given state. We will say more about the interpretation of an assumption below. Suppose there are two players, Ann and Bob, and consider the following description of beliefs.

- (S) Ann believes that Bob assumes that Ann believes that Bob’s assumption is wrong.

A paradox arises by asking the question:

- (Q) Does Ann believe that Bob’s assumption is wrong?

To ease the discussion, let \(C\) be Bob’s assumption in (S): that is, \(C\) is the statement:

- (C) Ann believes that Bob’s assumption is wrong.

So, (Q) asks whether \(C\) is true or false. We will argue that \(C\) is true if, and only if, \(C\) is false.

Suppose that \(C\) is true. Then, Ann believes that Bob’s assumption is wrong, and, by (positive) introspection, she believes that she believes this. That is, Ann believes that \(C\) is correct. Furthermore, according to (S), Ann believes that Bob’s assumption is \(C\). So, Ann, in fact, believes that Bob’s assumption is correct (she believes Bob’s assumption is \(C\) and that \(C\) is correct). Assuming that Ann’s beliefs are consistent, she does not also believe that Bob’s assumption is wrong. So, \(C\) is false.

Suppose that \(C\) is false. So Ann does not believe that Bob’s assumption is wrong. That is, Ann does not believe that \(C\) is wrong. By (negative) introspection, Ann believes that she does not believe that \(C\) is wrong. Now, by (S), Ann believes that Bob’s assumption is that she believes that \(C\) is wrong and Ann believes that she does not believe that \(C\) is wrong. Thus, Ann believes that Bob’s assumption is wrong. So, \(C\) is true.

Brandenburger and Keisler formalize the above argument in order to prove a very strong impossibility result about the existence of so-called assumption-complete structures. We need some notation to state this result. It will be most convenient to work in qualitative type spaces for two players (Definition 2.6). A qualitative type space for two players \(N=\{a, b\}\) is a structure (the set of states is not important for this argument, so we leave it out) \(\langle (T_a, T_b), (\lambda_a, \lambda_b)\rangle\) where